This tutorial runs the Portfolio Manager demo, which uses the Hadoop component integrated into DataStax Enterprise to create and manage a portfolio of stocks.

Note: Hadoop is deprecated for use with DataStax Enterprise. DSE Hadoop and BYOH (Bring Your Own Hadoop) are also deprecated.

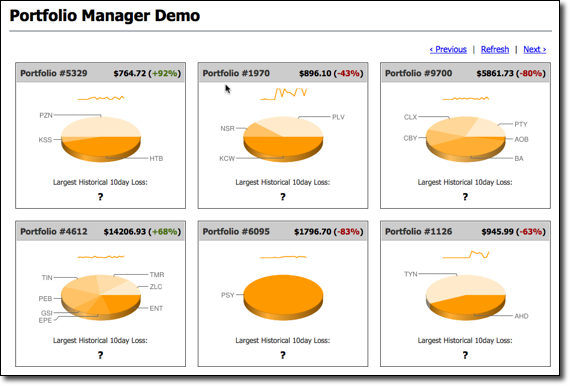

The use case is a financial application where users can actively create and manage a portfolio of stocks. On the Cassandra OLTP (online transaction processing) side, each portfolio contains a list of stocks, the number of shares purchased, and the purchase price. The demo's pricer utility simulates real-time stock data, and each portfolio updates to show its overall value and the percentage of gain or loss compared to the purchase price. This utility also generates 100 days of historical market data (the end-of-day price) for each stock. On the DSE OLAP (online analytical processing) side, a Hive MapReduce job calculates the greatest historical 10-day loss period for each portfolio, which is an indicator of the risk associated with a portfolio. This information is then fed back into the real-time application to allow customers to better gauge their potential losses.
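The two calculations described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Position` tuple, the dictionary shapes, and both function names are hypothetical and are not taken from the demo's pricer utility or its Hive script. The 10-day loss is interpreted here as the largest drop in total portfolio value between a day and the day 10 trading days later; the demo's own definition may differ.

```python
from typing import Dict, List, Tuple

# Hypothetical data shape (not the demo's schema):
# ticker -> (shares purchased, purchase price per share)
Position = Tuple[int, float]


def gain_pct(positions: Dict[str, Position], latest: Dict[str, float]) -> float:
    """Percentage gain (+) or loss (-) of current value versus purchase cost."""
    cost = sum(shares * paid for shares, paid in positions.values())
    value = sum(shares * latest[t] for t, (shares, _) in positions.items())
    return 100.0 * (value - cost) / cost


def worst_loss(
    positions: Dict[str, Position],
    history: Dict[str, List[float]],
    window: int = 10,
) -> float:
    """Largest drop in portfolio value across any `window`-day span.

    `history` maps ticker -> end-of-day prices, oldest first.
    Returns a negative number, or 0.0 if the value never drops.
    """
    days = min(len(history[t]) for t in positions)
    # Total portfolio value for each day of history.
    values = [
        sum(shares * history[t][d] for t, (shares, _) in positions.items())
        for d in range(days)
    ]
    worst = 0.0
    for start in range(days - window):
        change = values[start + window] - values[start]
        worst = min(worst, change)
    return worst
```

With 100 days of end-of-day prices per stock, `worst_loss(positions, history)` would scan 90 overlapping 10-day spans per portfolio. A more negative result suggests a riskier portfolio under this interpretation.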

Procedure

To run the demo:

Note: DataStax Demos do not work with either LDAP or internal authorization (username/password) enabled.

- Install a single Demo node using the DataStax Installer in GUI or Text mode with the following settings:
  - Install Options page - Default Interface: 127.0.0.1 (You must use this IP for the demo.)
  - Node Setup page - Node Type: Analytics
  - Analytic Node Setup page - Analytics Type: Spark + Integrated Hadoop

- Start DataStax Enterprise if you haven't already:
  - Installer-Services and Package installations:

    $ sudo service dse start

  - Installer-No Services and Tarball installations (run one of the following):

    $ install_location/bin/dse cassandra -k -t ## Starts node in Spark and Hadoop mode

    $ install_location/bin/dse cassandra -t ## Starts node in Hadoop mode

    The default install_location is /usr/share/dse.

- Go to the Portfolio Manager directory.

  The default location of the Portfolio Manager demo depends on the type of installation:

  | Installation type | Default location |
  |---|---|
  | Installer-Services and Package installations | /usr/share/dse/demos/portfolio_manager |
  | Installer-No Services and Tarball installations | install_location/demos/portfolio_manager |

- Run the bin/pricer utility to generate stock data for the application.

  The pricer utility takes several minutes to run.

- Start the web service:

  $ cd website

  $ sudo ./start

- Open a browser and go to http://localhost:8983/portfolio.

  The real-time Portfolio Manager demo application is displayed.

- Open another terminal.

- Start Hive and run the MapReduce job for the demo in Hive.
  - Installer-Services:

    $ dse hive -f /usr/share/dse/demos/portfolio_manager/10_day_loss.q

  - Package installations:

    $ dse hive -f /usr/share/dse-demos/portfolio_manager/10_day_loss.q

  - Installer-No Services and Tarball installations:

    $ install_location/bin/dse hive -f install_location/demos/portfolio_manager/10_day_loss.q

  The MapReduce job takes several minutes to run.

- To watch the progress in the Job Tracker node, open the following URL in a browser:

  http://localhost:50030/jobtracker.jsp

-

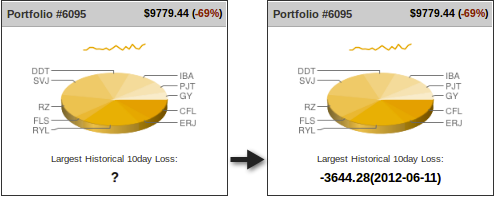

After the job completes, refresh the Portfolio Manager web

page.

The results of the Largest Historical 10 day Loss for each portfolio are

displayed.
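The last few steps of the procedure could be scripted along the following lines. This is a minimal sketch, not part of the demo: the function names, the install-type keys, and the retry parameters are all hypothetical. Only the paths, the `dse hive -f` invocation, and the default install_location come from the steps above.

```python
import time
import urllib.request
from typing import Callable, List

# Script paths per installation type, copied from the Hive step.
# The dictionary keys are hypothetical labels chosen for this sketch.
HIVE_SCRIPTS = {
    "installer-services": "/usr/share/dse/demos/portfolio_manager/10_day_loss.q",
    "package": "/usr/share/dse-demos/portfolio_manager/10_day_loss.q",
}


def hive_command(install_type: str, install_location: str = "/usr/share/dse") -> List[str]:
    """Build the argument list for the demo's Hive job."""
    if install_type in HIVE_SCRIPTS:
        return ["dse", "hive", "-f", HIVE_SCRIPTS[install_type]]
    if install_type in ("installer-no-services", "tarball"):
        return [
            f"{install_location}/bin/dse",
            "hive",
            "-f",
            f"{install_location}/demos/portfolio_manager/10_day_loss.q",
        ]
    raise ValueError(f"unknown installation type: {install_type}")


def http_ok(url: str) -> bool:
    """Return True if the URL answers with HTTP 200, False on any network error."""
    try:
        with urllib.request.urlopen(url, timeout=5) as resp:
            return resp.status == 200
    except OSError:  # URLError and HTTPError are subclasses of OSError
        return False


def wait_until(check: Callable[[], bool], attempts: int = 30, delay: float = 2.0) -> bool:
    """Call `check` up to `attempts` times, sleeping `delay` seconds between tries."""
    for _ in range(attempts):
        if check():
            return True
        time.sleep(delay)
    return False


# Example usage (left commented out so importing this file has no side effects):
# import subprocess
# if wait_until(lambda: http_ok("http://localhost:8983/portfolio")):
#     subprocess.run(hive_command("installer-services"), check=True)
```

Separating the command builder from the polling helper keeps each piece easy to check without a running node. The same `wait_until` helper could be pointed at the Job Tracker URL instead.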