How are read requests accomplished?

There are three types of read requests that a coordinator node can send to a replica:

- A direct read request

- A digest request

- A background read repair request

In a direct read request, the coordinator node contacts one replica node. In a digest request, the coordinator node contacts the number of replicas required by the consistency level, preferring the replicas that are currently responding the fastest. Each contacted node responds with a digest of the requested data. If multiple nodes are contacted, the rows from each replica are compared in memory for consistency.

If any replica nodes have out-of-date data, the coordinator node forwards the result from the replica with the most recent data (based on the timestamp) back to the client and sends a background read repair request to the out-of-date replicas. Read repair requests ensure that the requested row is made consistent on all replicas involved in a read query.
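The comparison and repair decision described above can be sketched in a few lines of Python. This is a simplified illustration, not the actual coordinator implementation: the `ReplicaRow` type, the `digest` and `reconcile` functions, and the use of MD5 over a string are all assumptions made for the example.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class ReplicaRow:
    replica: str    # hypothetical replica name
    value: str      # row contents returned by the replica
    timestamp: int  # write timestamp; a larger value is more recent


def digest(row: ReplicaRow) -> str:
    """Hash of the row, standing in for a digest response (assumed format)."""
    return hashlib.md5(f"{row.value}:{row.timestamp}".encode()).hexdigest()


def reconcile(rows: list[ReplicaRow]) -> tuple[ReplicaRow, list[str]]:
    """Return the most recent row and the replicas that need a read repair."""
    newest = max(rows, key=lambda r: r.timestamp)
    newest_digest = digest(newest)
    # Replicas whose digest differs from the newest row hold out-of-date data.
    stale = [r.replica for r in rows if digest(r) != newest_digest]
    return newest, stale
```

In this sketch, the first element of the result is what the client would receive, and the second element lists the replicas that would be sent a read repair.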

For illustrated examples of read requests, see Examples of read consistency levels.

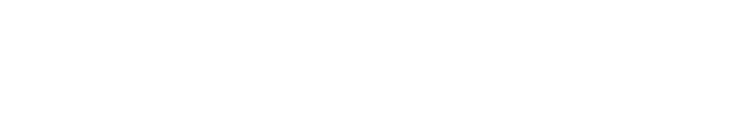

Rapid read protection using speculative_retry

When the originally selected replica nodes are down or taking too long to respond, rapid read protection allows the DataStax Distribution of Apache Cassandra™ (DDAC) to still complete read requests. If the table has been configured with the speculative_retry property, the coordinator node for the read request retries the request with another replica node when the original replica node takes longer than a configurable timeout value to complete the read request.
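The effect of a speculative retry on read latency can be illustrated with a small model. This is a sketch under simplifying assumptions: the threshold is treated as a fixed number of milliseconds, only one extra replica is tried, and the function name `read_latency_ms` and its parameters are hypothetical.

```python
def read_latency_ms(first_ms: float, backup_ms: float, retry_after_ms: float) -> float:
    """Latency seen by the coordinator with at most one speculative retry.

    first_ms:       response time of the originally chosen replica
    backup_ms:      response time of the additional replica
    retry_after_ms: how long the coordinator waits before retrying
    """
    if first_ms <= retry_after_ms:
        # The original replica answered in time, so no retry is sent.
        return first_ms
    # The retry was sent at retry_after_ms; whichever replica answers first wins.
    return min(first_ms, retry_after_ms + backup_ms)
```

For example, with a 10 ms threshold, a replica that would take 500 ms, and a backup that takes 8 ms, this model gives an 18 ms read instead of a 500 ms read.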

Diagram legend: coordinator node, chosen node.

Examples of read consistency levels

Diagrams illustrating read request examples with different consistency levels.

The rapid read protection diagram shows how the speculative_retry table property affects consistency.

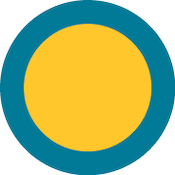

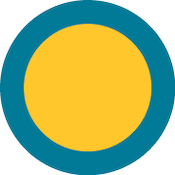

A single-datacenter cluster with a consistency level of QUORUM

In a single-datacenter cluster with a replication factor of 3 and a read consistency level of QUORUM, 2 of the 3 replicas (3/2 rounded down, plus 1, equals 2) for the given row must respond to fulfill the read request. If the contacted replicas have different versions of the row, the replica with the most recent version returns the requested data. In the background, the third replica is checked for consistency with the first two, and if needed, a read repair is initiated for the out-of-date replicas.
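The quorum arithmetic can be written out as a small helper. This is a minimal sketch; the function name `quorum` is an illustrative assumption and not part of any Cassandra API.

```python
def quorum(replication_factor: int) -> int:
    """Replicas that must respond for QUORUM: half the replicas, rounded down, plus one."""
    return replication_factor // 2 + 1
```

With a replication factor of 3, `quorum(3)` evaluates to 2, matching the example above.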

Diagram legend: coordinator node, chosen node, read response, read repair.

A single-datacenter cluster with a consistency level of ONE

In a single-datacenter cluster with a replication factor of 3 and a read consistency level of ONE, the closest replica for the given row is contacted to fulfill the read request. Based on the read_repair_chance setting of the table, a read repair might be initiated in the background for the other replicas.
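One way to picture a chance-based setting such as read_repair_chance is as a probability check made on each read. The sketch below assumes the setting is a probability between 0 and 1; the function name `should_read_repair` and the explicit random number generator argument are hypothetical choices made for the example.

```python
import random


def should_read_repair(read_repair_chance: float, rng: random.Random) -> bool:
    """Decide whether this read also triggers a background read repair."""
    # rng.random() returns a value in [0.0, 1.0), so a chance of 0.0 never
    # triggers a repair and a chance of 1.0 always does.
    return rng.random() < read_repair_chance
```

Passing the generator in explicitly keeps the decision reproducible when a seeded `random.Random` is used.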

Diagram legend: coordinator node, chosen node, read response, read repair.

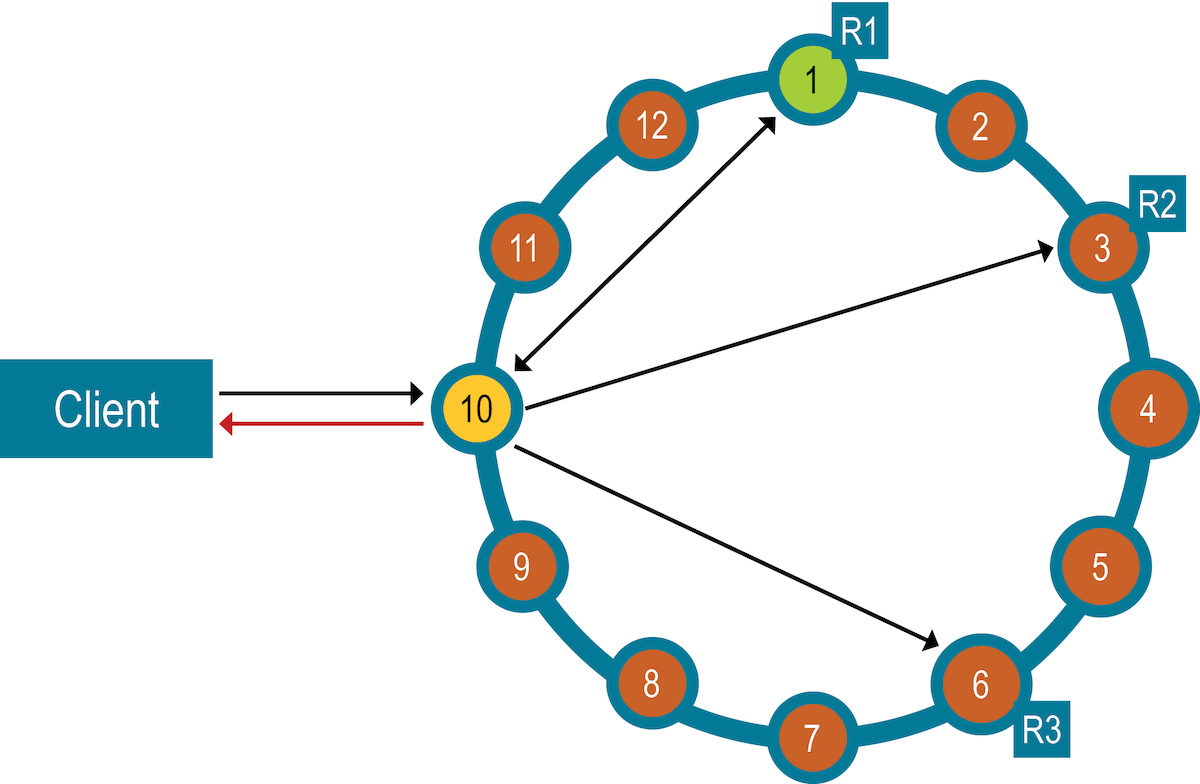

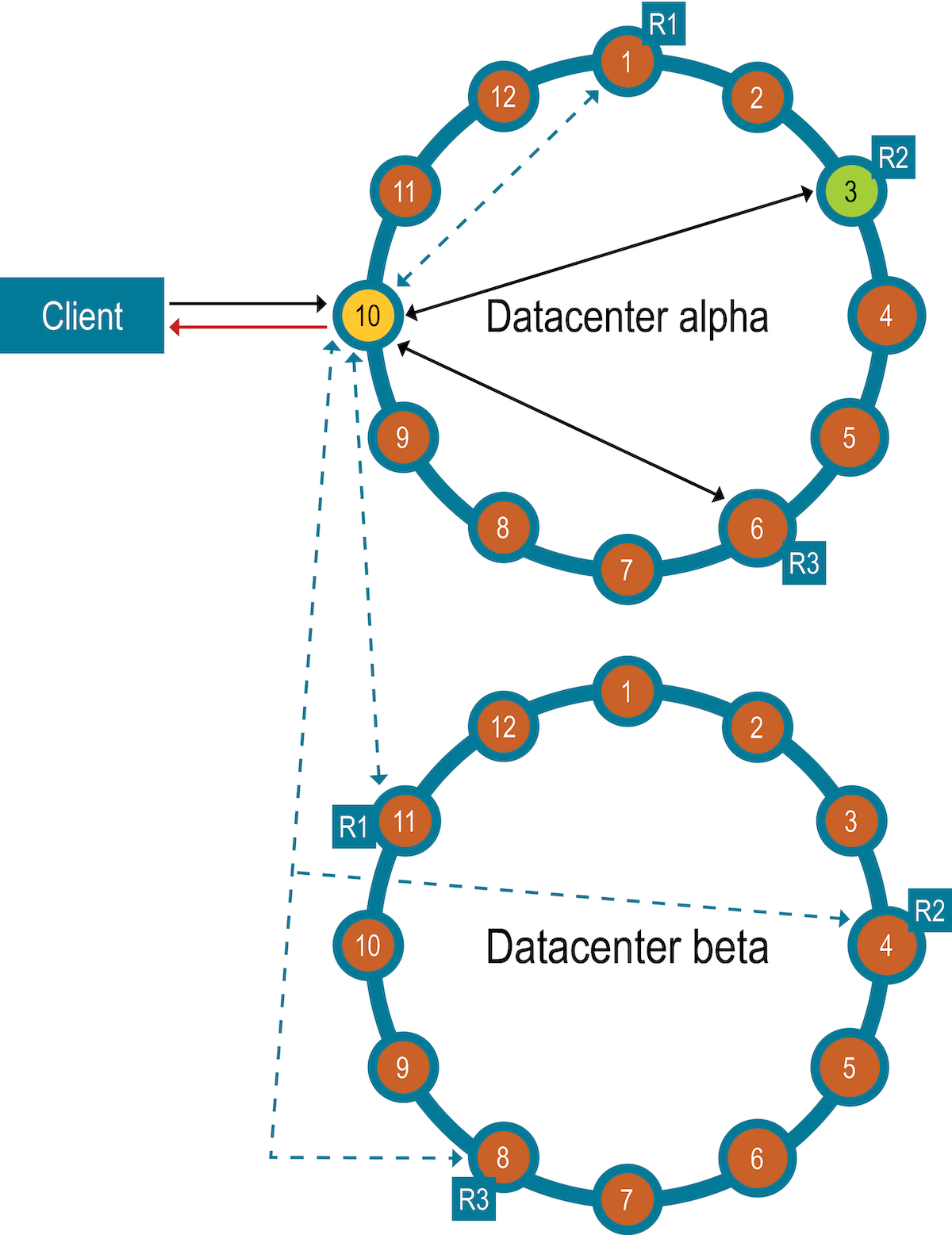

A two-datacenter cluster with a consistency level of QUORUM

In a two-datacenter cluster with a replication factor of 3 in each datacenter and a read consistency level of QUORUM, 4 of the 6 replicas for the given row must respond to fulfill the read request. The 4 replicas can be from any datacenter. In the background, the remaining replicas are checked for consistency with the first four. If needed, a read repair is initiated for the out-of-date replicas.
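Because QUORUM counts replicas across all datacenters, the check can be sketched as a sum over per-datacenter values. The function name `quorum_met`, the dictionary layout, and the datacenter names `dc1` and `dc2` are assumptions made for illustration.

```python
from __future__ import annotations


def quorum_met(rf_by_dc: dict[str, int], responses_by_dc: dict[str, int]) -> bool:
    """True if enough replicas responded, counting every datacenter together."""
    required = sum(rf_by_dc.values()) // 2 + 1
    return sum(responses_by_dc.values()) >= required
```

With `{"dc1": 3, "dc2": 3}` the required count is 4, so three responses from `dc1` plus one from `dc2` satisfy the read, while three responses from `dc1` alone do not.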

Diagram legend: coordinator node, chosen node, read response, read repair.

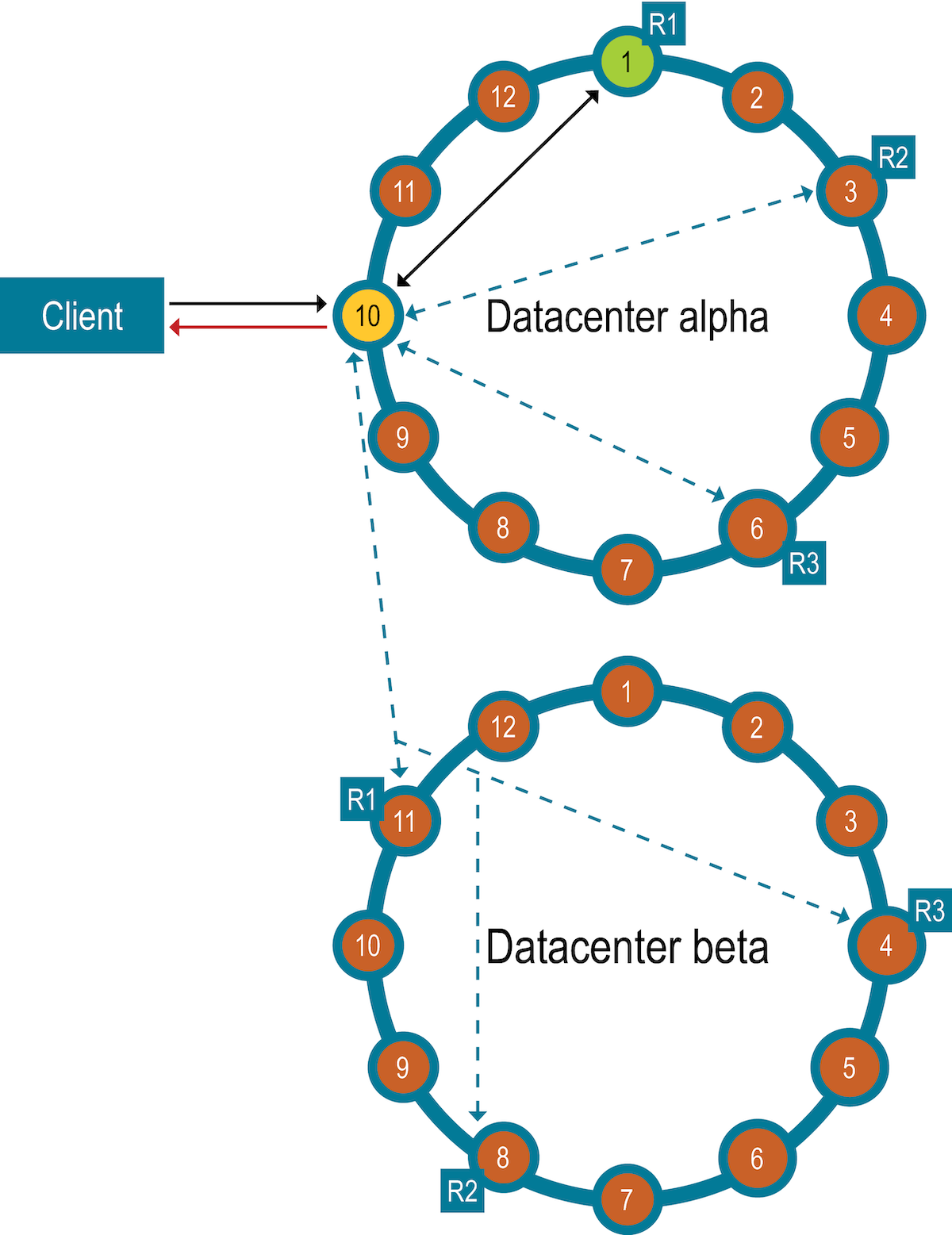

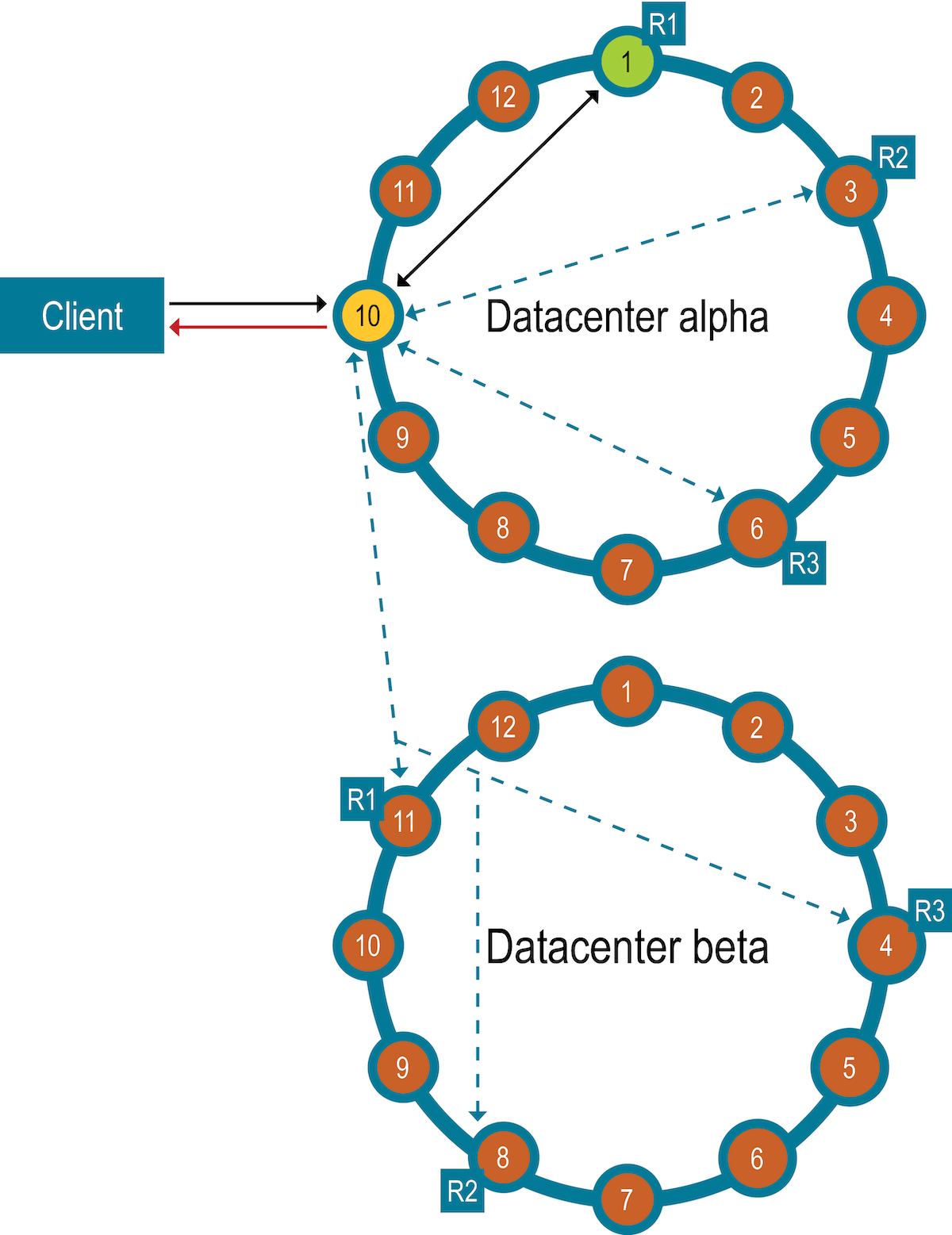

A two-datacenter cluster with a consistency level of LOCAL_QUORUM

In a two-datacenter cluster with a replication factor of 3 and a read consistency level of LOCAL_QUORUM, 2 replicas in the same datacenter as the coordinator node for the given row must respond to fulfill the read request. In the background, the remaining replicas are checked for consistency with the first two. If needed, a read repair is initiated for the out-of-date replicas.
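For LOCAL_QUORUM, only the coordinator's datacenter counts toward the requirement. The sketch below makes that explicit; the function name `local_quorum_met`, its parameters, and the datacenter names are hypothetical.

```python
from __future__ import annotations


def local_quorum_met(
    rf_by_dc: dict[str, int],
    responses_by_dc: dict[str, int],
    coordinator_dc: str,
) -> bool:
    """True if a quorum of replicas in the coordinator's datacenter responded."""
    required = rf_by_dc[coordinator_dc] // 2 + 1
    # Responses from other datacenters do not count toward LOCAL_QUORUM.
    return responses_by_dc.get(coordinator_dc, 0) >= required
```

In this model, responses from the remote datacenter never make up for a missing local response.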

Diagram legend: coordinator node, chosen node, read response, read repair.

A two-datacenter cluster with a consistency level of ONE

In a two-datacenter cluster with a replication factor of 3 and a read consistency level of ONE, the closest replica for the given row, regardless of datacenter, is contacted to fulfill the read request. Based on the read_repair_chance setting of the table, a read repair might be initiated in the background for the other replicas.

Diagram legend: coordinator node, chosen node, read response, read repair.

A two-datacenter cluster with a consistency level of LOCAL_ONE

In a two-datacenter cluster with a replication factor of 3 and a read consistency level of LOCAL_ONE, the closest replica for the given row in the same datacenter as the coordinator node is contacted to fulfill the read request. Based on the read_repair_chance setting of the table, a read repair might be initiated in the background for the other replicas.
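The difference between ONE and LOCAL_ONE comes down to which replicas are candidates for the closest replica. The sketch below models "closest" as the lowest measured latency, which is an assumption made for the example; the `Replica` type and the `closest_replica` function are hypothetical names.

```python
from __future__ import annotations

from typing import NamedTuple


class Replica(NamedTuple):
    name: str          # hypothetical replica name
    datacenter: str    # datacenter the replica belongs to
    latency_ms: float  # stand-in for "closeness" (assumed metric)


def closest_replica(replicas: list[Replica], coordinator_dc: str, local_only: bool) -> Replica:
    """Pick the closest replica; restrict to the local datacenter for LOCAL_ONE."""
    candidates = [
        r for r in replicas
        if not local_only or r.datacenter == coordinator_dc
    ]
    return min(candidates, key=lambda r: r.latency_ms)
```

Calling the function with `local_only=False` corresponds to ONE, where a replica in the remote datacenter can be chosen, and `local_only=True` corresponds to LOCAL_ONE.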

Diagram legend: coordinator node, chosen node, read response, read repair.