Spark introduction

Spark is the default mode when you start an analytics node in a packaged installation.

For those who do not want to use Hadoop, Apache Spark and Apache Shark offer performance improvements over previous versions of DataStax Enterprise Analytics using Hadoop. Spark runs locally on each node and executes in memory when possible. Based on Spark's Resilient Distributed Datasets (RDD), Spark can employ RAM for dataset persistence. Spark stores files for chained iteration in memory as opposed to using temporary storage in HDFS, as Hadoop does. Contrary to Hadoop, Spark utilizes multiple threads instead of multiple processes to achieve parallelism on a single node, avoiding the memory overhead of several JVMs. Spark is the default mode when you start an analytics node in a packaged installation.

About Apache Shark

From a usage perspective, Shark is the counterpart to Hive. Typically, queries run faster in Shark than in Hive. Shark stores metadata in the Cassandra keyspace called HiveMetaStore. External tables are not stored unless explicitly requested. Shark depends on Hive for parsing and for some optimization translations.

Spark architecture

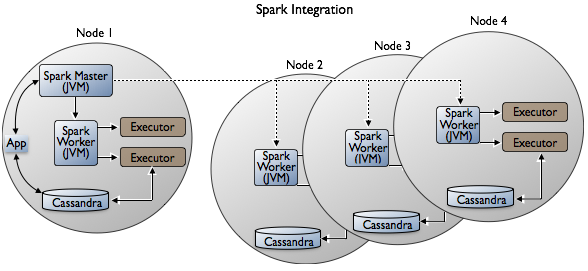

Spark processing resembles Hadoop processing. A Spark Master controls the workflow, and a Spark Worker launches executors responsible for executing part of the job submitted to the Spark master. Spark architecture is slightly more complex than Hadoop architecture, as described in the Apache documentation. Spark supports multiple applications. A single application can spawn multiple jobs and the jobs run in parallel. An application reserves some resources on every node and these resources are not freed until the application finishes. For example, every session of Spark shell or Shark shell is an application that reserves resources. By default, the scheduler tries allocate the application to the highest number of different nodes. For example, if the application declares that it needs four cores and there are ten servers, each offering two cores, the application most likely gets four executors, each on a different node, each consuming a single core. However, the application can get also two executors on two different nodes, each consuming two cores. The user can configure the application scheduler. Contrary to Hadoop trackers, Spark workers / master are spawned as separate processes and are very lightweight. Workers spawn other memory heavy processes that are dedicated to handling queries. Memory settings for those additional processes are fully controlled by the administrator.

In deployment, one analytics node runs the Spark master, and spark workers run on each of the analytics nodes. The Spark Master comes with built-in high availability. Spark executors use native integration to access data in local Cassandra nodes through the Open Source Spark-Cassandra Connector.

Shark uses Hadoop Input/Output formats to access Cassandra data. As you run Spark/Shark, you can access data in the Hadoop Distributed File System (HDFS) or the Cassandra File System (CFS) by using the URL for one or the other.

About the highly available Spark Master

The Spark Master High Availability mechanism uses a special table in dse_system keyspace to store information required to recover Spark workers and the application. Unlike the high availability mechanism mentioned in Spark documentation, DataStax Enterprise does not use ZooKeeper.

If you enable password authentication in Cassandra, DataStax Enterprise creates special users. The Spark Master process accesses Cassandra through the special users, one per Analytics node. The user names begin with the name of the node, followed by an encoded node identifier. The password is randomized.

Do not remove these users or change the passwords because doing so breaks the high availability mechanism.

In DataStax Enterprise 4.5.x, you manage the Spark Master location as you manage the Hadoop Job Tracker. By running a cluster in Spark plus Hadoop mode, the Job Tracker and Spark Master will always work on the same node.

If the original Spark Master fails, the reserved one automatically takes over. You can use the dsetool movejt command to set the reserve Spark Master.

Software components

- Spark Master, active only on one node in DC at a time

- Spark Worker, on all nodes

- Cassandra File System (CFS)

- Cassandra

Unsupported features

- GraphX

- MLLib common machine learning (ML) functionality

- Spark Python API (PySpark)

- Spark Java API

- Spark Streaming

- ODBC/JDBC

Writing to blob columns from Spark is not supported in this release. Reading columns of all types is supported; however, you need to convert collections of blobs to byte arrays before serializing.

Deploying nodes for Spark jobs

Use a virtual data center to isolate Spark jobs. Running Spark jobs consume resources that can affect latency and throughput. To isolate Spark traffic to a subset of dedicated nodes, follow workload isolation guidelines.

DataStax Enterprise 4.5 supports the use of Cassandra virtual nodes (vnodes) with Spark.