How is data read?

To satisfy a read, the DataStax Enterprise (DSE) database must combine results from the active memtable and potentially multiple SSTables. If the memtable has the desired partition data, then the data is read and merged with the data from the SSTables.

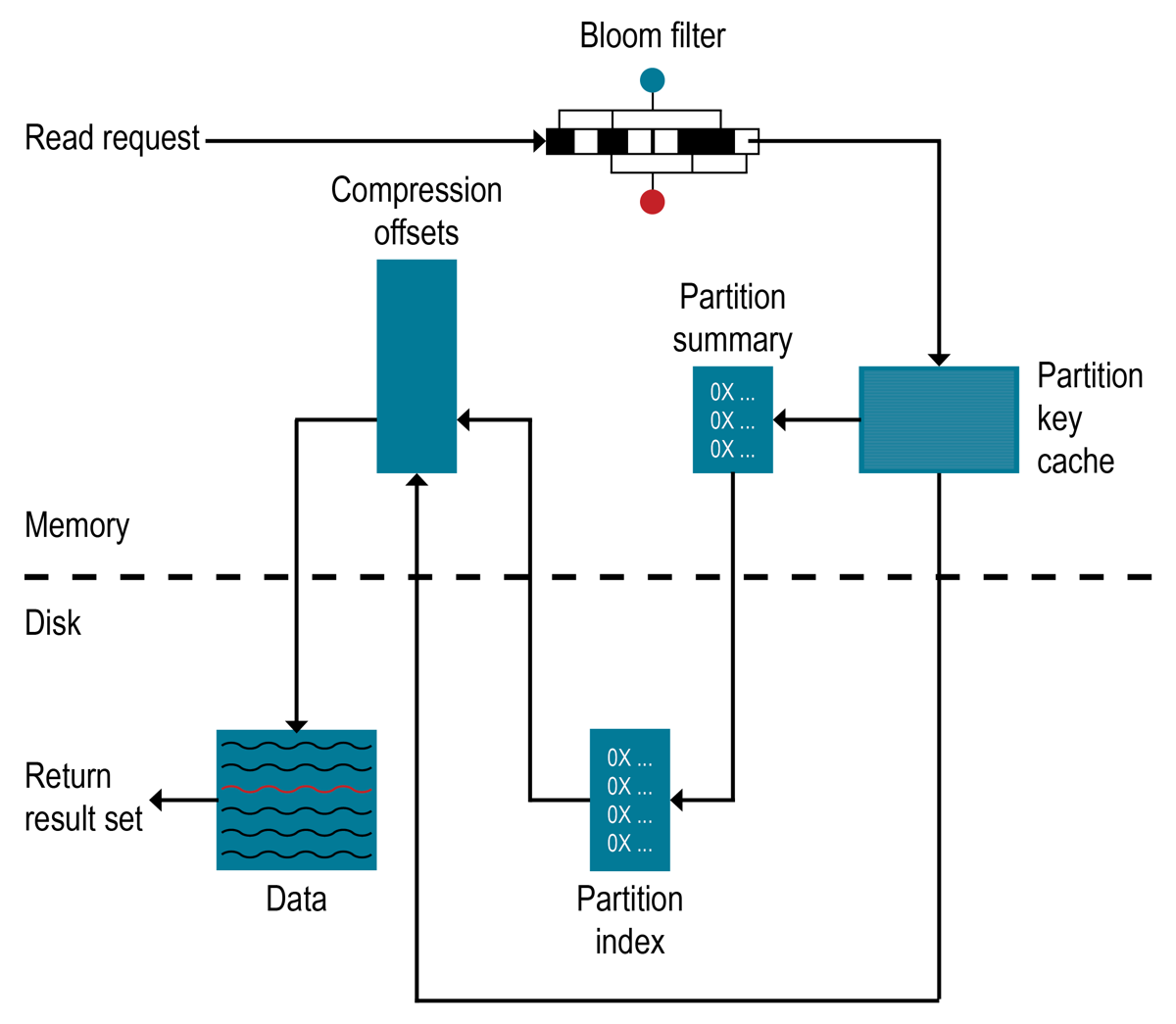

The database processes data at several stages on the read path to discover where the data is stored, starting with the data in the memtable and finishing with SSTables:

-

Check the memtable

-

Check row cache, if enabled

-

Check Bloom filter

-

Check partition key cache, if enabled

-

Go directly to the compression offset map if a partition key is found in the partition key cache. If not, check the partition summary.

If the partition summary is checked, then the partition index is accessed.

-

Locate the data on disk using the compression offset map

-

Fetch the data from the SSTable on disk

Row Cache

Typical of any database, reads are fastest when the most in-demand data fits into memory. The operating system page cache is best at improving performance, although the row cache can provide some improvement for very read-intensive operations, where read operations are 95% of the load. Row cache is not recommended for write-intensive operations. If the row cache is enabled, it stores a subset of the partition data stored on disk in the SSTables in memory. In DataStax Enterprise 5.0 and later, the row cache is stored in fully off-heap memory using an implementation that relieves garbage collection pressure in the Java Virtual Machine (JVM). The subset stored in the row cache uses a configurable amount of memory for a specified period of time. When the cache is full, the row cache uses LRU (least-recently-used) eviction to reclaim memory.

The row cache size is configurable, as is the number of rows to store. Configuring the number of rows to be stored is a useful feature, making a "Last 10 Items" query very fast to read. If row cache is enabled, desired partition data is read from the row cache, potentially saving two seeks to disk for the data. The rows stored in row cache are frequently accessed rows that are merged and saved to the row cache from the SSTables as they are accessed. After storage, the data is available to later queries. The row cache is not write-through. If a write comes in for the row, the cache for that row is invalidated and is not cached again until the row is read. Similarly, if a partition is updated, the entire partition is removed from the cache. When the desired partition data is not found in the row cache, the Bloom filter is checked.

Bloom Filter

Each SSTable has an associated Bloom filter, which can establish that an SSTable does not contain certain partition data. A Bloom filter can also determine the likelihood that partition data is stored in an SSTable by narrowing the pool of keys, which increases partition key lookup.

The DSE database checks the Bloom filter to discover which SSTables are likely to have the requested partition data. If the Bloom filter does not rule out an SSTable, the DSE database checks the partition key cache. Not all SSTables identified by the Bloom filter will have data. Because the Bloom filter is a probabilistic function, it can sometimes return false positives.

The Bloom filter is stored in off-heap memory, and grows to approximately 1-2 gigabytes (GB) per billion partitions. In an extreme case, each row can have a partition, so a single machine can easily have billions of these entries. To trade memory for performance, tune the Bloom filter.

Partition Key Cache

If the partition key is enabled, it stores a cache of the partition index in off-heap memory. The key cache uses a small, configurable amount of memory, and each "hit" saves one seek during the read operation. If a partition key is found in the key cache, the DSE database can go directly to the compression offset map to find the compressed block on disk that has the data. The partition key cache functions better once warmed, and can greatly improve over the performance of cold-start reads, where the key cache doesn’t yet have the keys stored in the key cache. If memory is very limited on a node, it is possible to limit the number of partition keys saved in the key cache. If a partition key is not found in the key cache, then the partition summary is searched.

The partition key cache size and number of keys to store in the cache is configurable.

Partition Summary

The partition summary is an off-heap in-memory structure that stores a sampling of the partition index. A partition index contains all partition keys, whereas a partition summary samples every X keys, and maps their location in the index file. For example, if the partition summary is set to sample every 20 keys, it stores the location of the first key as the beginning of the SSTable file, the 20th key and its location in the file, and so on. While not as exact as knowing the location of the partition key, the partition summary can shorten the scan to find the partition data location. After finding the range of possible partition key values, the partition index is searched.

By configuring the sample frequency, you can trade memory for performance.

The more granularity the partition summary has, the more memory it will use.

The sample frequency is changed using the min_index_interval and max_index_interval properties in the table definition.

A fixed amount of memory is configurable using the index_summary_capacity_in_mb property, and defaults to 5% of the heap size.

Partition Index

The partition index resides on disk and stores an index of all partition keys mapped to their offset. After the partition summary is checked for a range of partition keys, the search seeks the location of the desired partition key in the partition index. A single seek and sequential read of the columns over the passed-in range is performed. Using the information found, the partition index seeks the compression offset map to find the compressed block on disk that has the data.

Compression offset map

The compression offset map stores pointers to the exact location on disk where the desired partition data will be found. This location is stored in off-heap memory and is accessed by either the partition key cache or the partition index. After the compression offset map identifies the disk location, the desired compressed partition data is fetched from the correct SSTable(s). The query then receives the result set.

|

Within a partition, all rows are not equally expensive to query. The very beginning of the partition (the first rows, clustered by your key definition) is slightly less expensive to query because there is no need to consult the partition-level index. |

The compression offset map grows to 1-3 gigabytes (GB) per terabyte (TB) compressed. The more data is compressed, the greater number of compressed blocks required, and the larger the compression offset table. Compression is enabled by default even though going through the compression offset map consumes CPU resources. Having compression enabled makes the page cache more effective, and typically results in a timely search.