Mapping basic messages to table columns

Create a topic-table map for Kafka messages that contain only a key and a value in each record.

When messages are created using a Basic or primitive serializer, each message contains a key-value pair. Map the key and value to table columns. Ensure that the data type of each message field is compatible with the data type of its target column.

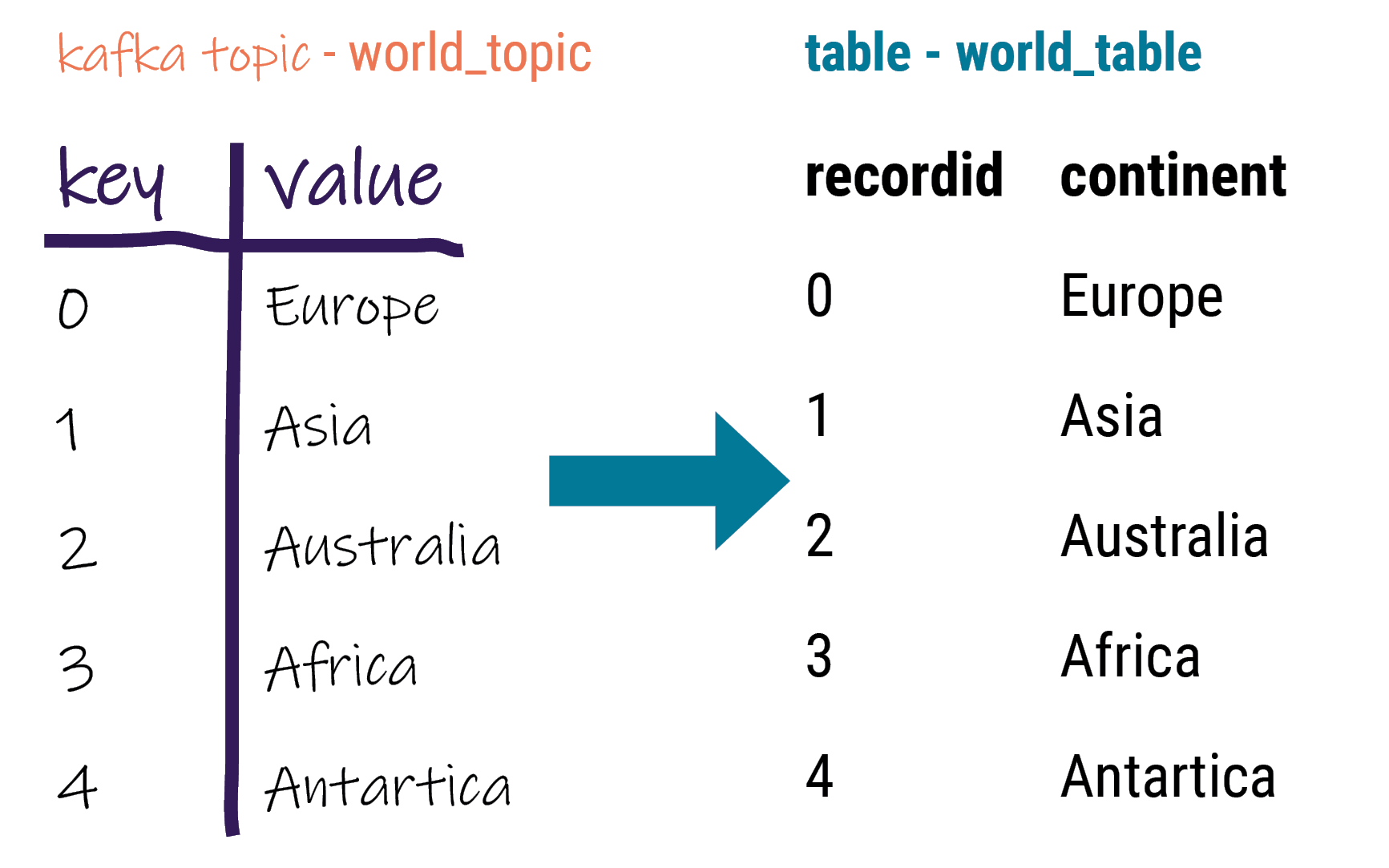

For example, the Kafka world topic (world_topic) contains 5 records with a key-value pair in each. The key is an integer and the value is text.
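As a rough illustration of what a field-to-column mapping expresses, the sketch below applies a mapping string of the form used in this topic (recordid=key, continent=value) to a single record. The function names and the sample record are hypothetical. This only models the column-to-field relationship; it is not the connector's implementation.

```python
def parse_mapping(mapping: str) -> dict:
    """Parse 'col1=field1, col2=field2' into {column: field}."""
    pairs = {}
    for item in mapping.split(","):
        column, field = item.split("=", 1)
        pairs[column.strip()] = field.strip()
    return pairs


def record_to_row(key, value, mapping: str) -> dict:
    """Build a row dict by looking up each mapped field on a key-value record."""
    record = {"key": key, "value": value}
    return {column: record[field] for column, field in parse_mapping(mapping).items()}


# Hypothetical sample record: integer key, text value.
row = record_to_row(1, "Africa", "recordid=key, continent=value")
print(row)
```

Each column name on the left of an equals sign receives the record field named on the right, so the key lands in recordid and the value in continent.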

Table requirements

Ensure the following when mapping fields to columns:

- Data in the Kafka field is compatible with the database table column data type.

- A Kafka field mapped to a database primary key (PK) column always contains data. Null values are not allowed in PK columns.
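The two requirements above can be checked before records reach the connector. The following is a minimal sketch, assuming an integer key mapped to a PK column and a text value. The type table, the set of PK fields, and the sample records are hypothetical and stand in for whatever the target table defines.

```python
# Hypothetical expected Python types for each Kafka field, chosen to match
# an integer primary key column and a text column.
EXPECTED_TYPES = {"key": int, "value": str}
PK_FIELDS = {"key"}  # fields mapped to primary key columns


def find_problems(record: dict) -> list:
    """Return a list of human-readable problems for one key-value record."""
    problems = []
    for field, expected in EXPECTED_TYPES.items():
        data = record.get(field)
        if data is None:
            # Nulls are only a problem when the field feeds a PK column.
            if field in PK_FIELDS:
                problems.append(f"{field}: null not allowed in a PK column")
            continue
        if not isinstance(data, expected):
            problems.append(
                f"{field}: expected {expected.__name__}, got {type(data).__name__}"
            )
    return problems


print(find_problems({"key": 1, "value": "Africa"}))     # well-formed record
print(find_problems({"key": None, "value": "Asia"}))    # null in the PK field
print(find_problems({"key": "7", "value": "Europe"}))   # key has the wrong type
```

A record with a null value field passes this check, because only fields mapped to PK columns are required to contain data.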

Procedure

- Verify that the correct converter is set in the key.converter and value.converter of the connect-distributed.properties file.

- Set up the supported database table.

- In the DataStax Apache Kafka Connector configuration file:

  - Add the topic name to topics.

  - Define the topic-to-table map prefix.

  - Define the field-to-column map.

  Example configurations for world_topic to world_table using the minimum required settings:

  Note: See DataStax Apache Kafka Connector configuration parameter reference for additional parameters. When the contactPoints parameter is missing, the connector uses localhost; this assumes the database is co-located on the DataStax Apache Kafka Connector node.

  - JSON for distributed mode:

        {
          "name": "world-sink",
          "config": {
            "connector.class": "com.datastax.kafkaconnector.DseSinkConnector",
            "tasks.max": "1",
            "topics": "world_topic",
            "topic.world_topic.world_keyspace.world_table.mapping": "recordid=key, continent=value"
          }
        }

  - Properties file for standalone mode:

        name=world-sink
        connector.class=com.datastax.kafkaconnector.DseSinkConnector
        tasks.max=1
        topics=world_topic
        topic.world_topic.world_keyspace.world_table.mapping=recordid=key, continent=value

- Update the configuration on a running worker, or deploy the DataStax Apache Kafka Connector for the first time.
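The mapping setting name in the procedure follows the pattern topic.&lt;topic&gt;.&lt;keyspace&gt;.&lt;table&gt;.mapping. As a sketch of how the distributed-mode JSON could be generated rather than written by hand, the helper below assembles the same minimum settings from their parts. The function name and its parameters are hypothetical. The connector class and setting names are taken from the example in step 3.

```python
import json


def build_sink_config(name, topic, keyspace, table, mapping, tasks_max=1):
    """Assemble the minimum connector settings for one topic-to-table map."""
    # Render {column: field} as "column=field, column=field".
    columns = ", ".join(f"{column}={field}" for column, field in mapping.items())
    return {
        "name": name,
        "config": {
            "connector.class": "com.datastax.kafkaconnector.DseSinkConnector",
            "tasks.max": str(tasks_max),
            "topics": topic,
            f"topic.{topic}.{keyspace}.{table}.mapping": columns,
        },
    }


config = build_sink_config(
    "world-sink",
    "world_topic",
    "world_keyspace",
    "world_table",
    {"recordid": "key", "continent": "value"},
)
print(json.dumps(config, indent=2))
```

The printed JSON has the same keys and values as the distributed-mode example. How it is submitted to a worker depends on the deployment.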