Mixing workloads in a cluster

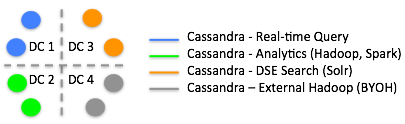

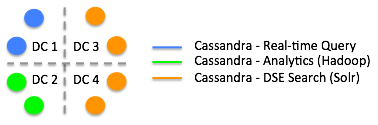

Organize nodes that run different workloads into virtual data centers. Put analytic nodes in one data center, search nodes in another, and Cassandra real-time transactional nodes in another data center.

A common question is how to run the following types of nodes in the same cluster:

- Real-time Cassandra

- DSE Hadoop, which is integrated Hadoop

- External Hadoop in the bring your own Hadoop (BYOH) model

- Spark

- DSE Search/Solr nodes

The answer is to organize the nodes running different workloads into virtual data centers: nodes running analytics workloads (DSE Hadoop, Spark, or BYOH) in one data center, search nodes in another, and Cassandra real-time nodes in a third. DataStax supports a data center that contains one or more nodes running in dual Spark/DSE Hadoop mode. A node runs in dual Spark/DSE Hadoop mode when you start it using the -k and -t options on tarball or Installer-No Services installations, or when you set the startup options HADOOP_ENABLED=1 and SPARK_ENABLED=1 on package or Installer-Services installations.

Spark workloads

Spark does not strictly require a separate data center or workload isolation from real-time and analytics workloads, but if you expect Spark jobs to be very resource intensive, use a dedicated data center for Spark. Spark jobs consume resources that can affect the latency and throughput of Cassandra jobs or Hadoop jobs. When you run a node in Spark mode, Cassandra runs an Analytics workload.

BYOH workloads

BYOH nodes need to be isolated from Cloudera or Hortonworks masters.

Solr workloads

The batch needs of Hadoop and the interactive needs of Solr are incompatible from a performance perspective, so these workloads need to be segregated.

Cassandra workloads

- A Cassandra real-time application needs very rapid access to Cassandra data.

The real-time application accesses data directly by key, large sequential blocks, or sequential slices.

- A DSE Search/Solr application needs a broadcast or scatter model to perform full-index searching.

Virtually every Solr search needs to hit a large percentage of the nodes in the virtual data center (depending on the RF setting) to access data in the entire Cassandra table. Data from only a small number of rows is returned at a time.

Creating a virtual data center

When you create a keyspace using CQL, Cassandra automatically creates a virtual data center for the cluster, even a one-node cluster. Assign nodes that run the same type of workload to the same data center. Separate virtual data centers for different types of nodes segregate workloads running Solr from those running other workload types. Segregating workloads ensures that only one type of workload is active per data center.

Workload segregation

- Real-time queries (Cassandra and no other services)

- Analytics (either DSE Hadoop, Spark, or dual mode DSE Hadoop/Spark)

- Solr

- External Hadoop system (BYOH)

In a cluster that has both BYOH and DSE integrated Hadoop nodes, the DSE integrated Hadoop nodes take priority at startup. Start the seed nodes in the BYOH data center after starting the DSE integrated Hadoop data centers.

Occasionally, there is a use case for keeping DSE Hadoop and Cassandra nodes in the same data center. When these nodes share a data center, you do not need to configure one or more additional replication factors.

To deploy a mixed workload cluster, see "Multiple data center deployment."

- Real-time queries (Cassandra and no other services)

- Analytics (Cassandra and integrated Hadoop)

This diagram shows DSE Hadoop analytics, Cassandra, and Solr nodes in separate data centers. In separate data centers, some DSE nodes handle search while others handle MapReduce, or just act as real-time Cassandra nodes. Cassandra ingests the data, Solr indexes the data, and you run MapReduce against that data in one cluster without performing manual extract, transform, and load (ETL) operations. Cassandra handles the replication and isolation of resources. The Solr nodes run HTTP and hold the indexes for the Cassandra table data. If a Solr node goes down, the commit log replays the Cassandra inserts, which correspond to Solr inserts, and the node is restored automatically.

Restrictions

- Do not create the keyspace using SimpleStrategy for production use or for use with mixed workloads.

- From DSE Hadoop and Cassandra real-time clusters in multiple data centers, do not attempt to insert data to be indexed by Solr using CQL or Thrift.

- Within the same data center, do not run Solr workloads on some nodes and other types of workloads on other nodes.

- Do not run Solr and DSE Hadoop on the same node in either production or development environments.

- Do not run some nodes in DSE Hadoop mode and some in Spark mode in the same data center.

You can run all the nodes in Spark mode, all the nodes in Hadoop mode, or all the nodes in Spark/DSE Hadoop mode.

Recommendations

Run the CQL or Thrift inserts on a Solr node in its own data center.

NetworkTopologyStrategy is highly recommended for most deployments because it makes expanding to multiple data centers much easier if that is required later.
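As a rough illustration of this recommendation, the following CQL sketches a keyspace that uses NetworkTopologyStrategy across three workload data centers. The keyspace name mixed_ks, the data center names Cassandra, Analytics, and Solr, and the replication factors are all assumptions chosen for the example; substitute the data center names that your snitch actually reports.

```cql
-- Hypothetical keyspace for a mixed-workload cluster.
-- Each key in the replication map other than 'class' is a data center name
-- (assumed here), and its value is the number of replicas kept there.
CREATE KEYSPACE IF NOT EXISTS mixed_ks
  WITH replication = {
    'class': 'NetworkTopologyStrategy',
    'Cassandra': 3,   -- real-time data center
    'Analytics': 2,   -- DSE Hadoop and/or Spark data center
    'Solr': 2         -- search data center
  };
```

Because each data center has its own entry in the replication map, adding a data center later means adding an entry instead of switching replication strategies.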

Getting cluster workload information

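Each node reports one of the following workload types in the workload column of the system.local and system.peers tables:

- Analytics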

- Cassandra

- Search

To get the workload of the local node, query the system.local table:

    SELECT workload FROM system.local;

The output looks something like this:

     workload
    -----------
     Analytics

    DESCRIBE FULL schema

The output shows the system and other table schemas. For example, the peers table schema is:

    CREATE TABLE peers (
      peer inet,
      data_center text,
      host_id uuid,
      preferred_ip inet,
      rack text,
      release_version text,
      rpc_address inet,
      schema_version uuid,
      tokens set<text>,
      workload text,
      PRIMARY KEY ((peer))
    ) WITH . . .;
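Given the peers schema shown here, a query along the following lines should list the data center and workload that each peer reports. It uses only columns that appear in that schema. The result reflects the view of the node you are connected to and does not include that node itself, whose workload is in system.local.

```cql
-- List every peer known to the connected node, with its data center,
-- rack, and workload type (Analytics, Cassandra, or Search).
SELECT peer, data_center, rack, workload
FROM system.peers;
```

Comparing the data_center and workload columns row by row is a quick way to check that each data center contains only one type of workload.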

Replicating data across data centers

You set up replication by creating a keyspace. You can change the replication of a keyspace after creating it.
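A minimal sketch of changing replication after the fact, assuming a keyspace named mixed_ks already exists and that the cluster has data centers named Cassandra and Analytics (all three names are hypothetical):

```cql
-- ALTER KEYSPACE replaces the whole replication map, so list every
-- data center that should hold replicas, not only the one being changed.
ALTER KEYSPACE mixed_ks
  WITH replication = {
    'class': 'NetworkTopologyStrategy',
    'Cassandra': 3,   -- unchanged in this example
    'Analytics': 3    -- replication factor raised for the analytics data center
  };
```

Raising a replication factor does not stream existing data to the new replicas automatically; running nodetool repair on the affected nodes afterward is the usual way to populate them.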