A deployment scenario with a mixed workload cluster has only one data center for each type of workload.

In this scenario, a mixed workload cluster has only one data center for each type of node. For example, if the cluster has 3 Hadoop nodes, 3 Cassandra nodes, and 2 Solr nodes, the cluster has 3 data centers, one for each type of node. In contrast, a multiple data center cluster has more than one data center for each type of node.
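To make the distinction concrete, here is a minimal Python sketch that groups nodes by workload and reports whether a cluster follows the one-data-center-per-workload layout. The node inventory and the data center names (`Cassandra`, `Analytics`, `Solr`) are illustrative assumptions, not values read from a real cluster.

```python
from collections import defaultdict

# Hypothetical inventory: node name -> (workload type, data center name).
NODES = {
    "node0": ("Cassandra", "Cassandra"),
    "node1": ("Cassandra", "Cassandra"),
    "node2": ("Cassandra", "Cassandra"),
    "node3": ("Hadoop", "Analytics"),
    "node4": ("Hadoop", "Analytics"),
    "node5": ("Hadoop", "Analytics"),
    "node6": ("Solr", "Solr"),
    "node7": ("Solr", "Solr"),
}


def data_centers_per_workload(nodes):
    """Map each workload type to the set of data centers that run it."""
    dcs = defaultdict(set)
    for workload, dc in nodes.values():
        dcs[workload].add(dc)
    return dict(dcs)


def is_single_dc_per_workload(nodes):
    """True if every workload type lives in exactly one data center."""
    return all(len(dcs) == 1 for dcs in data_centers_per_workload(nodes).values())


if __name__ == "__main__":
    print(len({dc for _, dc in NODES.values()}))  # number of data centers
    print(is_single_dc_per_workload(NODES))
```

Adding a second Cassandra data center (for example, a hypothetical `Cassandra2`) makes `is_single_dc_per_workload` return `False`, which corresponds to the multiple data center layout.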

In this type of deployment, data replicates across the data centers automatically and transparently, so no ETL work is necessary to move data between different systems or servers. You configure the number of copies of the data in each data center, and Cassandra handles the replication for you.
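The number of copies per data center is set per keyspace. As a hedged illustration, the Python helper below only builds the text of a CQL `CREATE KEYSPACE` statement that uses `NetworkTopologyStrategy` with one replication factor per data center. The keyspace name `demo_ks`, the data center names, and the replication factors are assumptions. The data center names must match the ones your snitch reports, for example in `nodetool status`.

```python
def create_keyspace_cql(keyspace, replicas_per_dc):
    """Build a CREATE KEYSPACE statement with a replication factor per data center.

    replicas_per_dc maps data center name -> number of copies kept in that data center.
    """
    options = ", ".join(f"'{dc}': {rf}" for dc, rf in replicas_per_dc.items())
    return (
        f"CREATE KEYSPACE {keyspace} WITH replication = "
        f"{{'class': 'NetworkTopologyStrategy', {options}}};"
    )


if __name__ == "__main__":
    # Hypothetical keyspace and data center names; adjust to your cluster.
    print(create_keyspace_cql("demo_ks", {"Cassandra": 3, "Analytics": 3, "Solr": 2}))
```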

To configure a multiple data center cluster, see Multiple data center deployment.

Prerequisites

- DataStax Enterprise is installed on each node.

- Choose a name for the cluster.

- For a mixed-workload cluster, determine the purpose of each node.

- Get the IP address of each node.

- Determine which nodes are seed nodes. (Seed nodes provide the means for all the nodes to find each other and learn the topology of the ring.)

- Other possible configuration settings are described in the cassandra.yaml configuration file.

- Set virtual nodes correctly for the type of data center. DataStax recommends using virtual nodes only on data centers running Cassandra real-time workloads. See Virtual nodes.
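The prerequisites amount to a small per-node plan. The Python sketch below records each node's workload, IP address, and seed role. From those it derives two values that the procedure below sets in cassandra.yaml: `num_tokens` (256 for Cassandra real-time nodes, 1 for Hadoop and Solr nodes) and the comma-separated seed list. The `Node` type and the helper names are hypothetical. The IP addresses follow the example in the procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """Hypothetical record of one node's role in the cluster."""
    name: str
    ip: str
    workload: str  # "Cassandra", "Hadoop", or "Solr"
    seed: bool = False


def num_tokens_for(workload):
    """Virtual nodes only on Cassandra real-time nodes; one token otherwise."""
    return 256 if workload == "Cassandra" else 1


def seeds_value(nodes):
    """Comma-separated seed list for the seed_provider 'seeds' parameter."""
    return ",".join(n.ip for n in nodes if n.seed)


def settings_for(node, nodes, cluster_name):
    """Illustrative subset of cassandra.yaml values for one node."""
    return {
        "cluster_name": cluster_name,
        "num_tokens": num_tokens_for(node.workload),
        "seeds": seeds_value(nodes),
    }


CLUSTER = [
    Node("node0", "110.82.155.0", "Cassandra", seed=True),
    Node("node1", "110.82.155.1", "Cassandra"),
    Node("node2", "110.82.155.2", "Cassandra"),
    Node("node3", "110.82.155.3", "Hadoop", seed=True),
    Node("node4", "110.82.155.4", "Hadoop"),
    Node("node5", "110.82.155.5", "Hadoop"),
    Node("node6", "110.82.155.6", "Solr"),
    Node("node7", "110.82.155.7", "Solr"),
]

if __name__ == "__main__":
    for node in CLUSTER:
        print(node.name, settings_for(node, CLUSTER, "MyDemoCluster"))
```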

Procedure

This configuration example describes installing an 8-node mixed-workload cluster with one data center for each workload type: Cassandra, Analytics, and Search. The default consistency level is QUORUM.
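QUORUM means a majority of all replicas must acknowledge a read or write. As a sketch, assuming a keyspace that uses NetworkTopologyStrategy, the quorum is computed from the sum of the replication factors across all data centers as floor(sum / 2) + 1. The replication factors in the usage lines are assumptions for illustration.

```python
def quorum(replicas_per_dc):
    """Number of replicas that must acknowledge a QUORUM request.

    Computed as (sum of replication factors // 2) + 1, summing over
    every data center listed in the keyspace's replication settings.
    """
    total = sum(replicas_per_dc.values())
    return total // 2 + 1


if __name__ == "__main__":
    # Hypothetical replication factors, one entry per data center.
    print(quorum({"Cassandra": 3}))                             # 2
    print(quorum({"Cassandra": 3, "Analytics": 3, "Solr": 2}))  # 5
```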

- Suppose the nodes have the following IP addresses, and one node in each of the Cassandra and Analytics data centers serves as a seed:

  - node0 110.82.155.0 (Cassandra seed)
  - node1 110.82.155.1 (Cassandra)
  - node2 110.82.155.2 (Cassandra)
  - node3 110.82.155.3 (Analytics seed)
  - node4 110.82.155.4 (Analytics)
  - node5 110.82.155.5 (Analytics)
  - node6 110.82.155.6 (Search; seed nodes are not required for Solr.)
  - node7 110.82.155.7 (Search)

- If the nodes are behind a firewall, open the required ports for internal/external communication. See Configuring firewall port access.

- If DataStax Enterprise is running, stop the nodes and clear the data:

  - Packaged installs:

        $ sudo service dse stop
        $ sudo rm -rf /var/lib/cassandra/* ## Clears the data from the default directories

  - Tarball installs: From the install directory:

        $ sudo bin/dse cassandra-stop
        $ sudo rm -rf /var/lib/cassandra/* ## Clears the data from the default directories

- Set the properties in the cassandra.yaml file for each node.

  Important: After making any changes in the cassandra.yaml file, you must restart the node for the changes to take effect.

  Location:

  - Packaged installs: /etc/dse/cassandra/cassandra.yaml
  - Tarball installs: install_location/resources/cassandra/conf/cassandra.yaml

  Properties to set:

  Note: If the nodes in the cluster are identical in terms of disk layout, shared libraries, and so on, you can use the same copy of the cassandra.yaml file on all nodes of the same workload type. (num_tokens differs between Cassandra nodes and Hadoop or Solr nodes.)

  - num_tokens: 256 for Cassandra nodes
  - num_tokens: 1 for Hadoop and Solr nodes
  - seeds: internal_IP_address of each seed node, as a comma-separated list
  - listen_address: empty

    If not set, Cassandra asks the system for the local address, the one associated with its hostname. In some cases Cassandra doesn't produce the correct address, and you must specify the listen_address.
  - auto_bootstrap: false (Add this setting only when initializing a fresh cluster with no data.)

  - If you are using a cassandra.yaml from a previous version, remove the following options, as they are no longer supported by DataStax Enterprise:

        ## Replication strategy to use for the auth keyspace.
        auth_replication_strategy: org.apache.cassandra.locator.SimpleStrategy
        auth_replication_options:
            replication_factor: 1

  Example:

      cluster_name: 'MyDemoCluster'
      num_tokens: 256
      seed_provider:
          - class_name: org.apache.cassandra.locator.SimpleSeedProvider
            parameters:
                - seeds: "110.82.155.0,110.82.155.3"
      listen_address:

- After you have installed and configured DataStax Enterprise on all nodes, start the seed nodes one at a time, and then start the rest of the nodes.

  Note: If a node restarted automatically, you must stop it and clear the data directories, as described above.

-

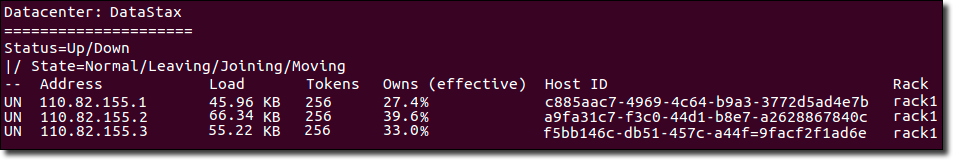

Check that your cluster is up and running:

- Packaged installs: $ nodetool status

- Tarball installs:

$

install_location/bin/nodetool

status
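To script the final check, the Python sketch below parses `nodetool status` text and reports any node whose two-letter state is not `UN` (Up/Normal). It assumes the usual layout: each data center section begins with a `Datacenter: <name>` line, and each node line begins with the state code followed by the address. The `SAMPLE` text is abbreviated and illustrative, not captured from a real cluster. Column layout can vary between versions.

```python
import re

# A node line starts with Up/Down + Normal/Leaving/Joining/Moving, then the address.
NODE_LINE = re.compile(r"^([UD][NLJM])\s+(\S+)")
DC_LINE = re.compile(r"^Datacenter:\s*(\S+)")


def parse_status(text):
    """Return a list of (data_center, address, state) tuples."""
    rows, dc = [], None
    for line in text.splitlines():
        line = line.strip()
        m = DC_LINE.match(line)
        if m:
            dc = m.group(1)
            continue
        m = NODE_LINE.match(line)
        if m:
            rows.append((dc, m.group(2), m.group(1)))
    return rows


def unhealthy(rows):
    """Nodes whose state is anything other than Up/Normal."""
    return [(dc, addr, state) for dc, addr, state in rows if state != "UN"]


# Abbreviated, made-up sample output for illustration only.
SAMPLE = """\
Datacenter: Cassandra
=====================
Status=Up/Down
|/ State=Normal/Leaving/Joining/Moving
--  Address       Load    Tokens  Rack
UN  110.82.155.0  50 KB   256     rack1
UN  110.82.155.1  48 KB   256     rack1
Datacenter: Solr
================
UN  110.82.155.6  40 KB   1       rack1
DN  110.82.155.7  38 KB   1       rack1
"""

if __name__ == "__main__":
    print(unhealthy(parse_status(SAMPLE)))
```

In practice you would feed the function the captured output of `nodetool status` instead of `SAMPLE`.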