Batch operations

Batching is used to insert or update data in tables. Understanding the use of batching to avoid negative performance impacts.

Atomicity of batch operations

Batch operations (inserts, updates, or deletes) for both single partition and multiple partitions ensure atomicity.

An atomic transaction is an indivisible and irreducible series of operations that are either all executed, or none are executed. Single partition batch operations are atomic automatically, while multiple partition batch operations require the use of a batchlog to ensure atomicity.

Use batching if atomicity is a primary concern for a group of operations.

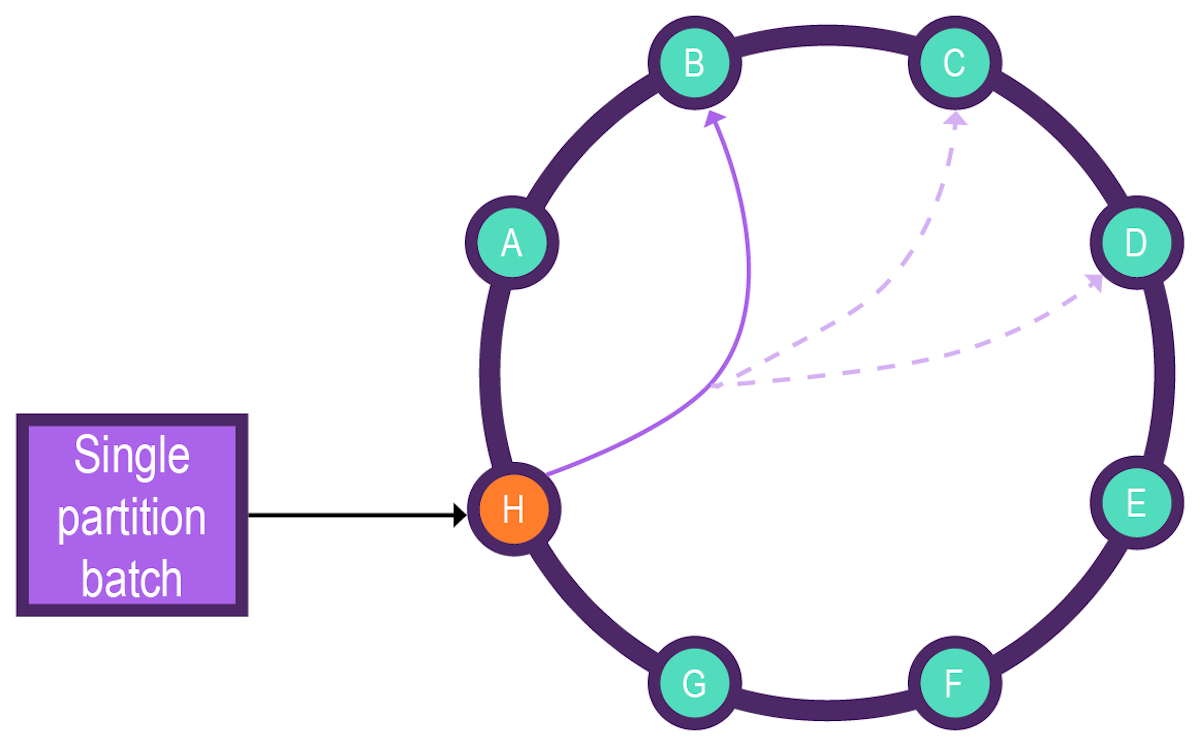

- Single partition batch

-

Single partition batch operations are processed on the server side as a single mutation for improved performance, provided the number of operations do not exceed the maximum size of a single operation or cause the query to time out.

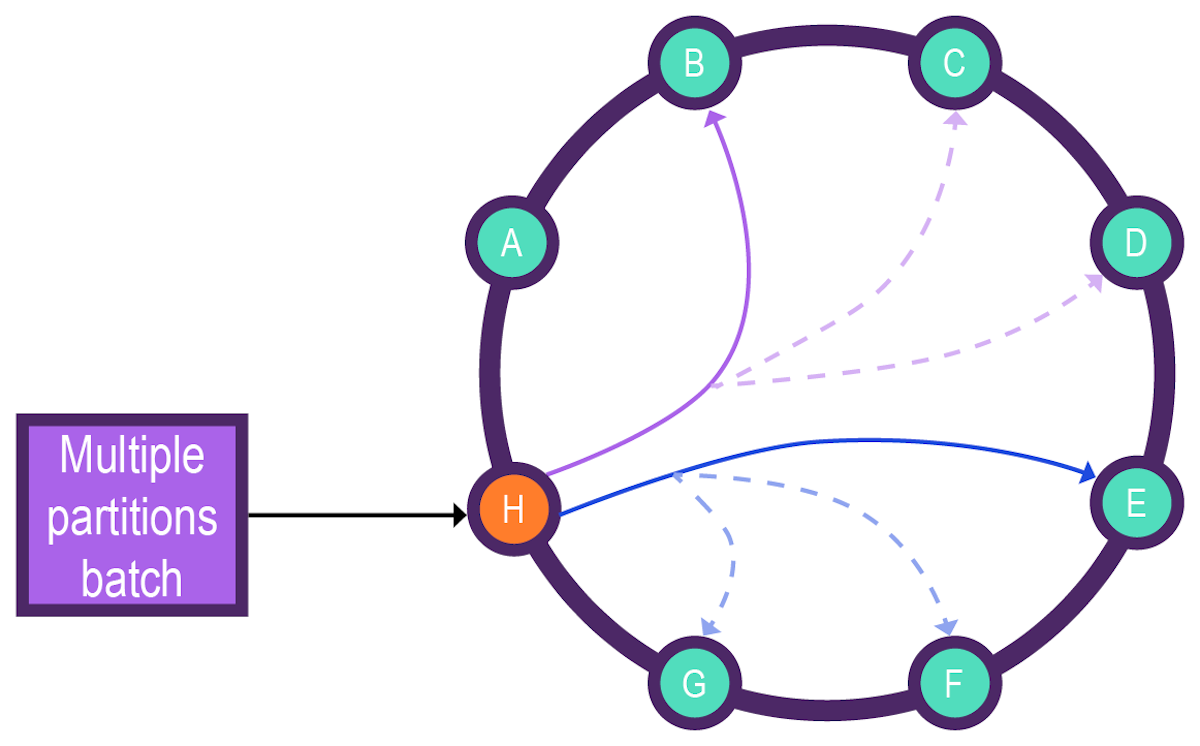

- Multiple partition batch

-

Multiple partition batch operations often have performance issues and should be used only if atomicity must be ensured.

A cassandra.yaml option lets you choose the` batchlog_endpoint_strategy`.

Batching can hurt performance if used ineffectively

Batching can be effective for single partition write operations. But batches are often mistakenly used in an attempt to optimize performance. Depending on the batch operation, the performance may actually worsen. Some batch operations place a greater burden on the coordinator node and lessen the efficiency of the data insertion.

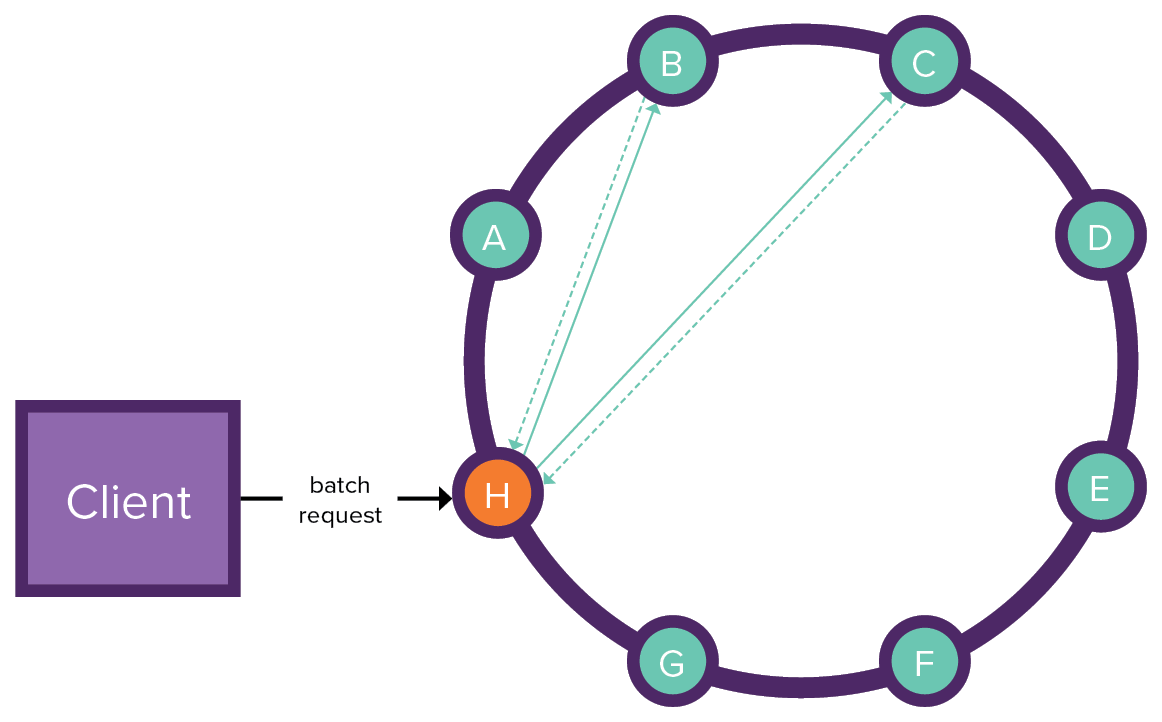

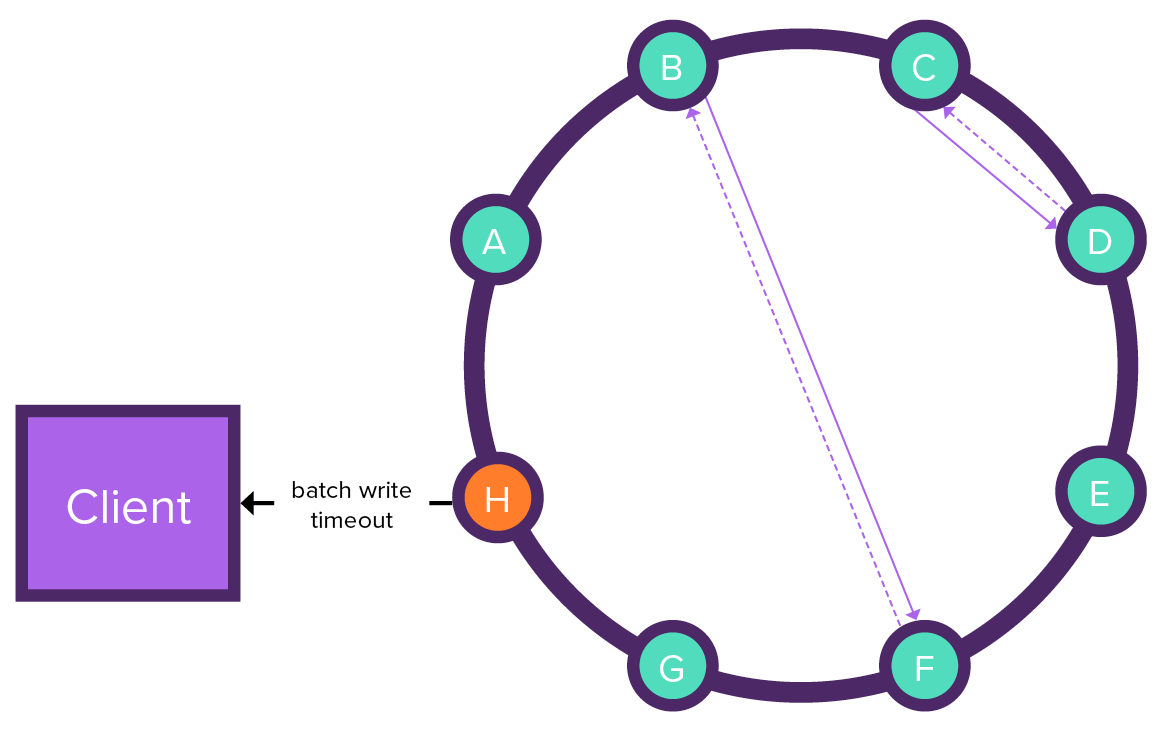

Client sends a batch request to the coordinator node, which sends two copies of the batchlog to the batchlog nodes. The batchlog nodes confirm receipt of the batchlog.

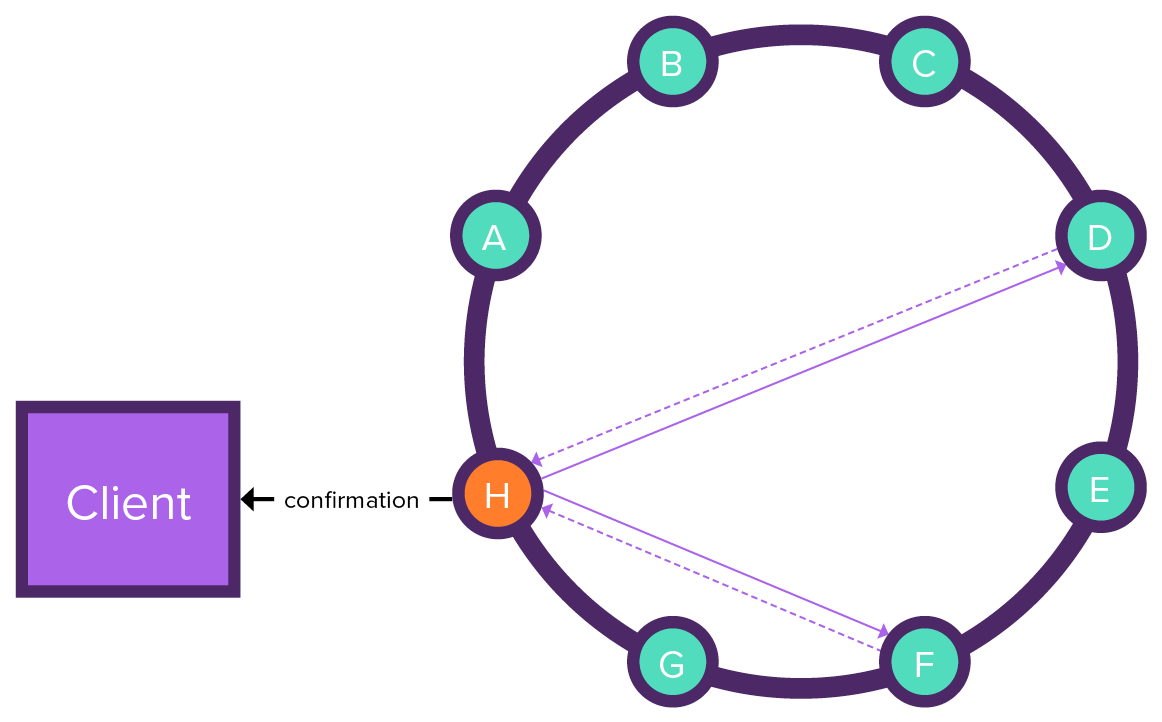

The coordinator node executes the batch statements on the nodes. The receiving nodes return the execution results to the coordinator node. The coordinator node removes the batchlog from the batchlog nodes and sends confirmation to the client.

When coordinator node fails during batch execution, the batchlog node tries to execute the batch and the coordinator node sends a batch write timeout to the client. The batchlog nodes continue to try to execute statements until results are received from each receiving node. Once all of the results are received, the batchlog is removed from the batchlog nodes.

The number of partitions involved in a batch operation, and therefore the potential for multi-node access, can significantly increase latency. In all batching, the coordinator node manages all write operations, so that the coordinator node can be a bottleneck.

When to use batching

-

Inserts, updates, or deletes to a single partition when atomicity and isolation is a requirement. Atomicity ensures that either all or nothing is written. Isolation ensures that partial insertion or updates are not accessed until all operations are complete.

Single partition batching sends one message to the coordinator for all operations. All replicas for the single partition receive the data, and the coordinator waits for acknowledgement. No batchlog mechanism is necessary. The number of nodes involved in the batch is bounded by the number of replicas.

-

Ensuring atomicity for small inserts or updates to multiple partitions when data inconsistency must not occur.

Multiple partition batching sends one message to the coordinator for all operations. The coordinator writes a batchlog that is replicated to other nodes to ensure that inconsistency will not occur if the coordinator fails. Then the coordinator must wait for all nodes with an affected partition to acknowledge the operations before removing the logged batch. The number of nodes involved in the batch is bounded by number of distinct partition keys in the logged batch plus (possibly) the batchlog replica nodes. While a batch operation for a small number of partitions may be critical for consistency, this use case is more the exception than the rule.

Example of a single partition BATCH statement

The following example shows an optimal, beneficial use of a single partition BATCH statement.

The examples use the table cyclist_expenses:

CREATE TABLE IF NOT EXISTS cycling.cyclist_expenses (

cyclist_name text,

balance float STATIC,

expense_id int,

amount float,

description text,

paid boolean,

PRIMARY KEY (cyclist_name, expense_id)

);Note that balance is STATIC.

|

If there are two different tables in the same keyspace and the two tables have the same partition key, this scenario is considered a single partition batch. There will be a single mutation for each table. This happens because the two tables could have different columns, even though the keyspace and partition are the same. Batches allow a caller to bundle multiple operations into a single batch request. All the operations are performed by the same coordinator. The best use of a batch request is for a single partition in multiple tables in the same keyspace. Also, batches provide a guarantee that mutations will be applied in a particular order. |

The table cyclist_expenses stores records about each purchase by a cyclist and includes the running balance of all the cyclist’s purchases.

Because the balance is static, all purchase records for a cyclist have the same running balance, and you can only provide the partition key when updating the data.

To update a non-static column, you would also have to provide a clustering key.

Using batched conditional updates, you can maintain a running balance. If the balance were stored in a separate table, maintaining a running balance wouldn’t be possible because a batch having conditional updates cannot span multiple partitions.

All the INSERT and UPDATE statements in this batch write to the same partition, keeping the latency of the write operation low.

BEGIN BATCH

INSERT INTO cycling.cyclist_expenses (

cyclist_name, balance

) VALUES (

'Vera ADRIAN', 0

) IF NOT EXISTS;

INSERT INTO cycling.cyclist_expenses (

cyclist_name, expense_id, amount, description, paid

) VALUES (

'Vera ADRIAN', 1, 7.95, 'Breakfast', false

);

APPLY BATCH;This batching example includes conditional updates combined with using static columns. Recall that single partition batches are not logged.

|

It would be reasonable to expect that an UPDATE to the balance could be included in this BATCH statement: However, it is important to understand that all the statements processed in a |

An acknowledgement of a batch statement is returned if the batch operation is successful:

[applied]

-----------

TrueThe resulting table will only have one record so far:

cyclist_name | expense_id | balance | amount | description | paid

--------------+------------+---------+--------+-------------+-------

Vera ADRIAN | 1 | 0 | 7.95 | Breakfast | False

(1 rows)The balance can be adjusted separately with an UPDATE statement:

UPDATE cycling.cyclist_expenses SET balance = -7.95

WHERE cyclist_name = 'Vera ADRIAN' IF balance = 0;Now the balance will reflect that breakfast was unpaid:

cyclist_name | expense_id | balance | amount | description | paid

--------------+------------+---------+--------+-------------+-------

Vera ADRIAN | 1 | -7.95 | 7.95 | Breakfast | False

(1 rows)Example of a multiple partition logged batch

Another example is using BATCH to perform a multiple partition insert that involves writing the same data to two related tables that must be synchronized.

The following example modifies multiple partitions, which in general is to be avoided, but the batch only contains two statements:

BEGIN BATCH

INSERT INTO cycling.cyclist_name (

id, lastname, firstname

) VALUES (

6ab09bec-e68e-48d9-a5f8-97e6fb4c9b12, 'SMITH', 'John'

);

INSERT INTO cycling.cyclist_expenses (

cyclist_name, expense_id, amount, description, paid

) VALUES (

'John SMITH', 3, 15.00, 'Lunch', false

);

APPLY BATCH;Another common use for this type of batch operation is updating usernames and passwords.

When to avoid batching

Batched statements can save network round-trips between the client and the server, and possibly between the coordinator and the replicas. However, consider if batch operations are truly necessary.

For example, inserting or updating data to multiple partitions, especially when a large number of partitions are involved, is an inefficient use of batching.

As previously stated, batching to multiple partitions has performance costs. Unlogged batch operations are possible, to avoid the additional time cost of the batchlog, but the coordinator node will be a bottleneck because of synchronization. A better alternative uses asynchronous writes using driver code; the token aware loading balancing will distribute the writes to several coordinator nodes, decreasing the time to complete the insert and update operations.

Example of a misused BATCH statement

Batch operations that involve multiple partitions are an anti-pattern.

Since different partitions can be stored on different nodes, writing to multiple partitions can significantly increase the number of nodes involved in an operation.

Coordinator nodes must wait for nodes to acknowledge a request, with the minimum number of nodes determined by the consistency level.

Stricter consistency levels, such as ALL, can increase latency, particularly when multi-partition writes increase the number of nodes handling a request.

The following example shows an inefficient BATCH statement that writes to four different partitions, given the partition key id:

BEGIN BATCH

INSERT INTO cycling.cyclist_name (

id, lastname, firstname

) VALUES (

6d5f1663-89c0-45fc-8cfd-60a373b01622,'HOSKINS', 'Melissa'

);

INSERT INTO cycling.cyclist_name (

id, lastname, firstname

) VALUES (

38ab64b6-26cc-4de9-ab28-c257cf011659,'FERNANDES', 'Marcia'

);

INSERT INTO cycling.cyclist_name (

id, lastname, firstname

) VALUES (

9011d3be-d35c-4a8d-83f7-a3c543789ee7,'NIEWIADOMA', 'Katarzyna'

);

INSERT INTO cycling.cyclist_name (

id, lastname, firstname

) VALUES (

95addc4c-459e-4ed7-b4b5-472f19a67995,'ADRIAN', 'Vera'

);

APPLY BATCH;Because this statement writes to four partitions, the number of nodes required to complete the request can be up to four times the write consistency level.

Similarly, a batch write to 100 partitions scales to 100 times the write consistency level

Max possible nodes = Partitions in BATCH statement x Consistency levelKnowing how data is partitioned is crucial for understanding the performance implications of BATCH operations.