Prepare the ZDM Proxy deployment infrastructure

After confirming that your clusters, data model, and application logic meet the compatibility requirements for ZDM Proxy, you must prepare the infrastructure to deploy your ZDM Proxy instances.

DataStax recommends using ZDM Proxy Automation and ZDM Utility to manage your ZDM Proxy deployment. If you choose to use these tools, you must also prepare infrastructure for ZDM Proxy Automation.

Choose where to deploy the proxy

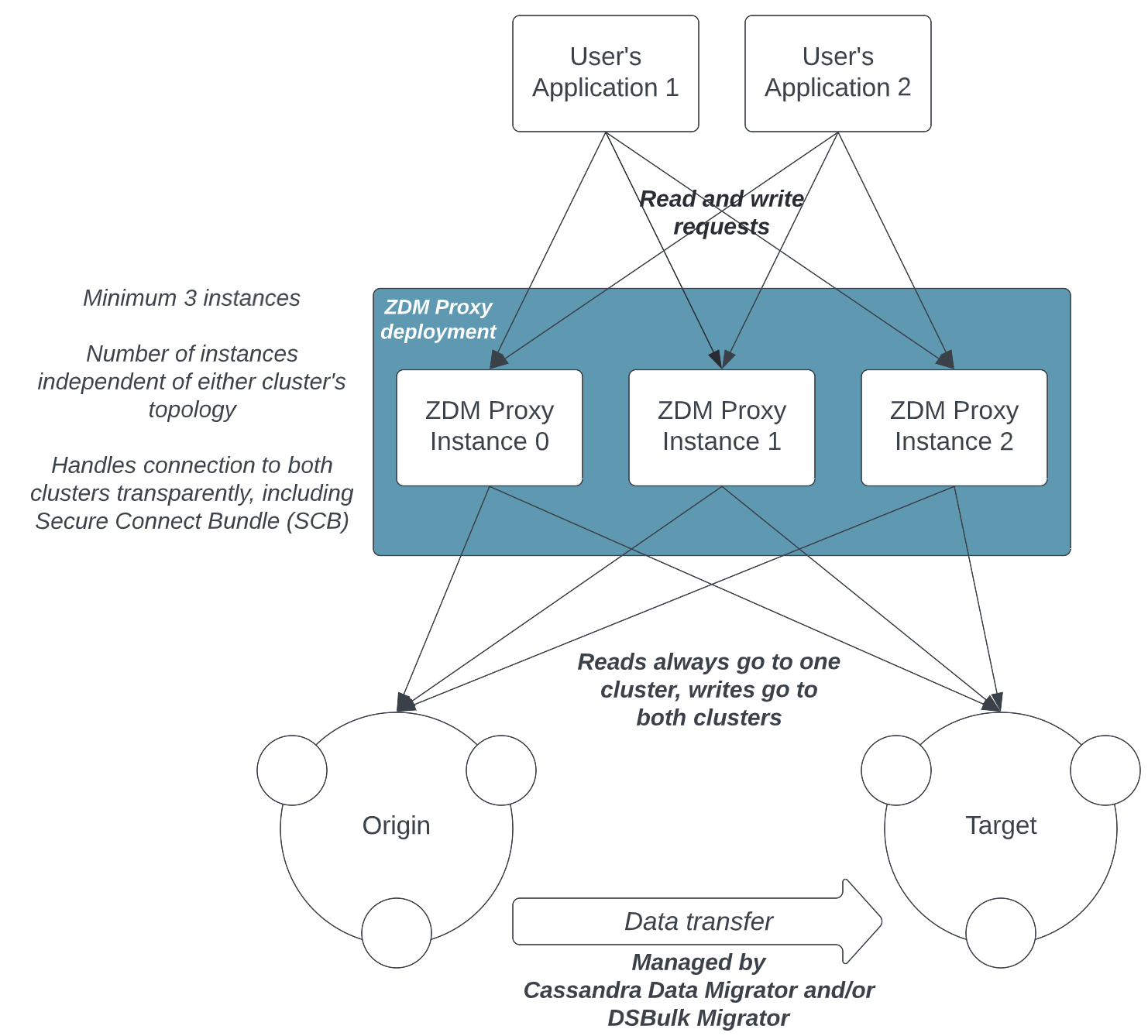

A typical ZDM Proxy deployment is made up of multiple proxy instances. DataStax recommends a minimum of three proxy instances for any deployment other than local test or demo environments.

All ZDM Proxy instances must be reachable by your client application, and they must be able to connect to your origin and target clusters. DataStax recommends that you deploy ZDM Proxy as close to your client application instances as possible. For more information, see Connectivity requirements.

You can deploy ZDM Proxy on any cloud provider or on-premises, depending on your existing infrastructure.

The following diagram shows a typical deployment with connectivity between client applications, ZDM Proxy instances, and clusters:

The ZDM Proxy process is lightweight, requiring only a small amount of resources and no storage to persist its state aside from logs.

Multiple datacenter clusters

If you have a multi-datacenter cluster with multiple sets of client application instances deployed to geographically distributed datacenters, you must plan a separate ZDM Proxy deployment for each datacenter.

In the configuration for each ZDM Proxy deployment, specify only the contact points that belong to that datacenter, and set the origin_local_datacenter and target_local_datacenter properties as needed.

If your origin and target clusters are both multi-datacenter clusters, it is more complicated to orchestrate traffic routing through ZDM Proxy correctly. DataStax recommends contacting IBM Support for assistance with complex multi-region and multi-datacenter migrations.

Don’t deploy ZDM Proxy as a sidecar

Don’t deploy ZDM Proxy as a sidecar because it was designed to mimic communication with a Cassandra-based cluster. For this reason, DataStax recommends deploying multiple ZDM Proxy instances, each running on a dedicated machine, instance, or VM.

For best performance, deploy your ZDM Proxy instances as close as possible to your client applications, ideally on the same local network, but don’t co-deploy them on the same machines as the client applications. This way, each client application instance can connect to all ZDM Proxy instances, just as it would connect to all nodes in a Cassandra-based cluster or datacenter.

This deployment model provides maximum resilience and failure tolerance guarantees, and it allows the client application driver to continue using the same load balancing and retry mechanisms that it would normally use.

Conversely, deploying a single ZDM Proxy instance undermines this resilience mechanism and creates a single point of failure, which can affect client applications if one or more nodes of the underlying origin or target clusters go offline. In a sidecar deployment, each client application instance would be connecting to a single ZDM Proxy instance, and would, therefore, be exposed to this risk.

Hardware requirements

You need a number of machines to run your ZDM Proxy instances, plus additional machines for the centralized jumphost and for running the recommended data migration and validation tools, DataStax Bulk Loader (DSBulk) or Cassandra Data Migrator (CDM).

This section uses the term machine broadly to refer to a cloud instance (on any cloud provider), a VM, or a physical server.

- ZDM Proxy instances

  You need one machine for each ZDM Proxy instance. DataStax recommends a minimum of three proxy instances for all migrations that aren’t for local testing purposes.

  Each machine must meet the following specifications:

  - Ubuntu Linux 20.04 or 22.04, Red Hat Family Linux 7 or newer
  - 4 vCPUs
  - 8 GB RAM
  - 20 to 100 GB of storage for the root volume
  - Equivalent to AWS c5.xlarge, GCP e2-standard-4, or Azure A4 v2

  These machines can be on any cloud provider or on-premises, depending on your existing infrastructure, and they should be as close as possible to your client application instances for best performance.

- Jumphost and monitoring

  You need at least one machine for the jumphost, which is the centralized manager for the ZDM Proxy instances and runs the monitoring stack (Prometheus and Grafana). With ZDM Proxy Automation, the jumphost also runs the Ansible Control Host container.

  Typically, a single machine is used for all of these functions, but you can split them across different machines if you prefer. This documentation assumes you are using a single machine.

  The jumphost machine must meet the following specifications:

  - Ubuntu Linux 20.04 or 22.04, Red Hat Family Linux 7 or newer
  - 8 vCPUs
  - 16 GB RAM
  - 200 to 500 GB of storage depending on the amount of metrics history that you want to retain
  - Equivalent to AWS c5.2xlarge, GCP e2-standard-8, or Azure A8 v2

- Data migration tools (DSBulk or CDM)

  You need at least one machine to run DSBulk or CDM for the data migration and validation (Phase 2). Even if you plan to use another data migration tool, you might need infrastructure for these tools or your chosen tool. For example, CDM is used for data validation after migrating data with Astra DB Sideloader.

  DataStax recommends that you start with at least one VM that meets the following minimum specifications:

  - Ubuntu Linux 20.04 or 22.04, Red Hat Family Linux 7 or newer
  - 16 vCPUs
  - 64 GB RAM
  - 200 GB to 2 TB of storage or more for larger migrations
  - Equivalent to AWS m5.4xlarge, GCP e2-standard-16, or Azure D16 v5

  Whether you need additional machines depends on the total amount of data you need to migrate. All machines must meet the minimum specifications.

  For example, if you have 20 TB of existing data to migrate, you could use four VMs to speed up the migration. Then, you would run DSBulk or CDM in parallel on each VM, with each one responsible for migrating specific tables or a portion of the data, such as 25% or 5 TB.

  If you have one especially large table, such as 75% of the total data in one table, you can use multiple VMs to migrate that one table. For example, if you have four VMs, you can use three VMs in parallel for the large table by splitting the table’s full token range into three groups. Then, each VM migrates one group of tokens, and you use the fourth VM to migrate the remaining smaller portion of the data.

  Regardless of the number of data migration machines or the amount of data you need to migrate, make sure the machines have enough space to stage data between unloading and loading during the migration.

  Additionally, make sure that your origin and target clusters can handle high traffic from your chosen data migration tool in addition to the live traffic from your application.

  Test migrations in a lower environment before you proceed with production migrations.

  If you need assistance with your migration, contact IBM Support.
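The token-range split described for one especially large table can be sketched in shell. This is a minimal sketch, not a DSBulk or CDM feature: it assumes that the clusters use the default Murmur3Partitioner, whose tokens span -2^63 to 2^63-1, and `split_token_range` is a hypothetical helper name. It prints one start token and one end token per group.

```shell
#!/usr/bin/env bash
# Sketch: split the full Murmur3Partitioner token range into N contiguous,
# non-overlapping groups, one group per data migration VM.
# split_token_range is a hypothetical helper, not a DSBulk or CDM command.

split_token_range() {
  local groups="${1:-0}"
  if ! [ "$groups" -ge 1 ] 2>/dev/null; then
    echo "usage: split_token_range NUMBER_OF_GROUPS" >&2
    return 1
  fi

  local min=$(( -9223372036854775807 - 1 ))   # -2^63
  local max=9223372036854775807               # 2^63 - 1
  local start="$min"
  local end i=1 step

  # Emit every group except the last. The group width is computed from the
  # two halves of the range so that it stays within 64-bit signed arithmetic.
  while [ "$i" -lt "$groups" ]; do
    step=$(( max / groups - min / groups ))
    end=$(( start + step - 1 ))
    echo "$start $end"
    start=$(( end + 1 ))
    i=$(( i + 1 ))
  done

  # The last group absorbs any remainder and always ends at the maximum token.
  echo "$start $max"
}

# Three groups, matching the example of three VMs sharing one large table.
split_token_range 3
```

Each output line is one inclusive token range. How you pass a range to your migration tool depends on the tool, so check the token-range filtering options in the DSBulk or CDM documentation before using these values.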

Connectivity requirements

Don’t allow external machines to directly access the ZDM Proxy machines. The jumphost is the only machine that should have direct access to the ZDM Proxy machines.

- Core infrastructure ports

  The ZDM Proxy machines must be reachable by the following:

  - The client application instances on port 9042
  - The jumphost on port 22
  - The machine running the monitoring stack (the jumphost or a separate machine) on port 14001

- Clusters

  The ZDM Proxy machines must be able to connect to the origin and target cluster nodes.

  For self-managed clusters like Cassandra and DSE, ZDM Proxy must be able to connect on the Cassandra Native Protocol port, which is typically 9042.

  For Astra, you must ensure that outbound connectivity is possible over the Astra endpoint indicated in the Secure Connect Bundle (SCB). Connections over private endpoints are supported.

- Jumphost and monitoring

  The following connectivity requirements apply to the jumphost and monitoring machine. If you use separate machines for these functions, these requirements apply to both machines.

  - Connect to ZDM Proxy instances on port 14001 for metrics collection.
  - Connect to ZDM Proxy instances on port 22 for ZDM Proxy Automation, log inspection, and troubleshooting.
  - Allow incoming, external SSH connections.

    DataStax strongly recommends that you limit external access to specific IP ranges. For example, limit access to the IP ranges of your corporate networks or trusted VPNs.

  - Expose the Grafana UI on port 3000.

  External connections are required so that ZDM Proxy Automation can download the necessary software packages (Docker, Prometheus, Grafana) and the ZDM Proxy image from DockerHub.
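As a quick spot check of the jumphost requirements listed above, the following sketch tests whether each ZDM Proxy machine accepts TCP connections on port 22 (SSH) and port 14001 (metrics). It is an illustration only: `check_port` and `check_proxies` are hypothetical helper names, and the sketch assumes that Bash (with `/dev/tcp` support) and the GNU coreutils `timeout` command are available on the jumphost.

```shell
#!/usr/bin/env bash
# Sketch: run on the jumphost to confirm that each ZDM Proxy machine accepts
# TCP connections on the SSH port (22) and the metrics port (14001).
# check_port and check_proxies are hypothetical helper names.

# Return 0 if a TCP connection to host:port opens within 3 seconds.
check_port() {
  local host="$1"
  local port="$2"
  timeout 3 bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

# Print one "host:port open" or "host:port unreachable" line for each
# proxy address passed as an argument and each required port.
check_proxies() {
  local host port
  for host in "$@"; do
    for port in 22 14001; do
      if check_port "$host" "$port"; then
        echo "${host}:${port} open"
      else
        echo "${host}:${port} unreachable"
      fi
    done
  done
}
```

To use it, pass the private IP addresses of your proxy machines, for example `check_proxies PRIVATE_IP_ADDRESS_OF_PROXY_INSTANCE_0 PRIVATE_IP_ADDRESS_OF_PROXY_INSTANCE_1`. An `unreachable` result means only that the TCP connection didn't open in time, which can point to a firewall rule, a security group, or a service that isn't running yet.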

Connect to ZDM Proxy infrastructure from an external machine

To connect to the jumphost from an external machine, ensure that its IP address belongs to a permitted IP range. If you are connecting through a VPN that only intercepts connections to selected destinations, you might need to add a route from your VPN IP gateway to the public IP of the jumphost.

A custom SSH config file can simplify the process of connecting to the jumphost and, by extension, the ZDM Proxy instances.

To use the following template, replace all placeholders with the appropriate values for your deployment.

Add more entries if you have more than three proxy instances.

Save the file with any name you prefer, such as zdm_ssh_config.

```
Host JUMPHOST_PRIVATE_IP_ADDRESS jumphost
  Hostname JUMPHOST_PUBLIC_IP_ADDRESS
  Port 22

Host PRIVATE_IP_ADDRESS_OF_PROXY_INSTANCE_0 zdm-proxy-0
  Hostname PRIVATE_IP_ADDRESS_OF_PROXY_INSTANCE_0
  ProxyJump jumphost

Host PRIVATE_IP_ADDRESS_OF_PROXY_INSTANCE_1 zdm-proxy-1
  Hostname PRIVATE_IP_ADDRESS_OF_PROXY_INSTANCE_1
  ProxyJump jumphost

Host PRIVATE_IP_ADDRESS_OF_PROXY_INSTANCE_2 zdm-proxy-2
  Hostname PRIVATE_IP_ADDRESS_OF_PROXY_INSTANCE_2
  ProxyJump jumphost

Host *
  User LINUX_USER
  IdentityFile ABSOLUTE PATH WITH FILENAME FOR LOCALLY GENERATED KEY PAIR LIKE ~/.ssh/zdm-key-XXX
  IdentitiesOnly yes
  StrictHostKeyChecking no
  GlobalKnownHostsFile /dev/null
  UserKnownHostsFile /dev/null
```

Then, use the SSH config file to connect to your jumphost:

```
ssh -F zdm_ssh_config jumphost
```

Connect to a ZDM Proxy instance by specifying the corresponding instance number in your config file:

```
ssh -F zdm_ssh_config zdm-proxy-0
```

Next steps

Next, prepare the target cluster for your migration.