Restore a backup to a specific point in time

For a point-in-time restore, OpsCenter intelligently chooses which snapshots and commit logs to restore from based on the date and time you are restoring the cluster to. If an acceptable combination of snapshots and commit logs cannot be found, the restore fails. A detailed error message is visible in the Activity section of the OpsCenter UI.

The Backup Service requires control over the data and structure of its destination locations. The backup destinations must be dedicated for use only by OpsCenter. Any additional directories or files in those destinations can prevent the Backup Service from properly conducting a Backup or Restore operation.

Keep the following caveats in mind when creating and restoring backups:


Prerequisites

For point-in-time restores to work, you must enable commit log backups and complete at least one snapshot backup before the time to which you are restoring.
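As a rough illustration of this prerequisite, the following shell sketch picks the most recent snapshot taken before a requested restore time and fails when no such snapshot exists. It is not OpsCenter's actual selection logic, which this page does not describe; the function name `latest_snapshot_before` and the use of epoch-second timestamps are assumptions made for the example.

```shell
# Illustration only: not OpsCenter's real selection logic.
# Print the most recent snapshot time that is earlier than the target time.
# Arguments are epoch seconds: the target first, then the snapshot times.
# Returns 1 and prints nothing when no snapshot precedes the target.
latest_snapshot_before() {
    target="$1"
    shift
    best=""
    for snap in "$@"; do
        # Keep this snapshot if it precedes the target and is newer than the best so far.
        if [ "$snap" -lt "$target" ] && { [ -z "$best" ] || [ "$snap" -gt "$best" ]; }; then
            best="$snap"
        fi
    done
    if [ -z "$best" ]; then
        return 1
    fi
    echo "$best"
}
```

Called as `latest_snapshot_before 1000 100 900 1100`, the function prints 900. With a target earlier than every snapshot it prints nothing and returns a non-zero status, which mirrors the case where a point-in-time restore cannot proceed.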

When performing a point-in-time restore, the cluster topology must not have changed since the backup. Attempting to perform a point-in-time restore on a cluster whose topology has changed will fail. DataStax strongly recommends performing a snapshot backup both before and after changing the cluster topology. After changing the topology, you can then restore the cluster based on that backup. If reverting to the previous topology, you can use the backup with the original topology to restore the cluster.

Known limitations

- Point-in-time restore fails if any tables were re-created during the time period that the point-in-time restore covers.

- For point-in-time restores, you cannot choose a different cluster because a commit log cannot be replayed on any node other than the one on which it was recorded.

Procedure

-

Select cluster name > Services.

-

Select the Details link for the Backup Service.

-

Click Restore Backup.

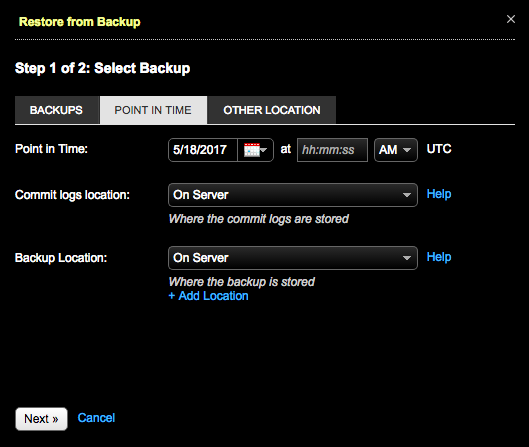

The Restore from Backup, Step 1 of 2: Select Backup dialog appears.

-

Click the Point In Time tab.

-

Complete your selections:

-

Required: Point in Time: Set the date and time to which you want to restore your data.

-

Required: Commit logs location: Select the location of the commit logs; either On Server, Local FS, or in a supported cloud. The location of commit logs is configured when enabling commit log backups.

-

Required: Backup location: Select the location of the snapshot; either On Server, Local FS, or in a supported cloud. Click the +Add Location link to add another location.

-

Click Next.

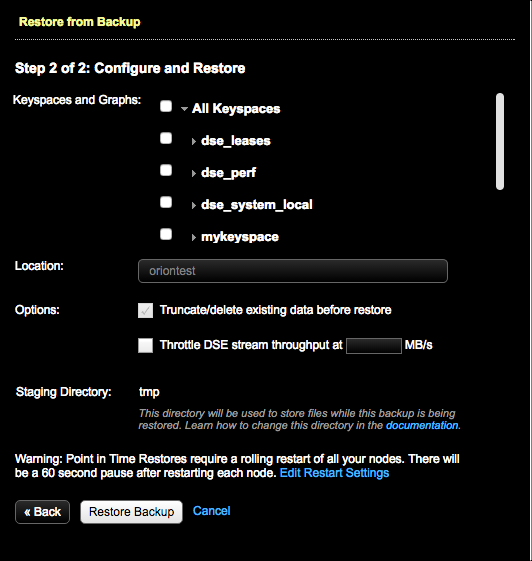

The Restore from Backup, Step 2 of 2: Configure and Restore dialog appears.

- In Keyspaces and Graphs, select the tables or graphs you want to restore.

  - Click All Keyspaces or All Graphs to restore all keyspaces or all graphs.

  - Click the keyspace name or graph name to include all tables or all graphs in the keyspace.

  - To select specific tables or graphs, expand the keyspace name or graph name and select the specific tables or graphs to restore.

  For DSE 6.0.0-6.0.4, when restoring a DSE Graph backup without selecting Use sstableloader, DSE must be restarted to ensure all data is available.

- Optional: To remove the existing keyspace data before the data is restored, select Truncate/delete existing data before restore. This completely removes any updated data in the cluster for the keyspaces you are restoring.

  You can choose not to truncate or delete existing data only when sstableloader is used for the restore. If not using sstableloader, truncating or deleting existing data is selected by default.

- To prevent overloading the network, set a maximum transfer rate for the restore using the Throttle DSE stream throughput at __ MB option.

  To enter a value for this option, you must select Use sstableloader. Otherwise, the transfer rate value is ignored.

- Optional: To bulk load external data into a cluster, select Use sstableloader.

  Using sstableloader restores data to a cluster regardless of its original topology, at the expense of speed and performance. Restoring to a cluster that uses tiered storage requires using sstableloader. See sstableloader for details.

  When restoring from a backup, OpsCenter might report that the restore was successful, even if it failed. This behavior can occur when copying SSTables into the new data directories and running nodetool refresh afterward. To determine whether the restore failed, search the DataStax Agent logs for the string "Error copying sstables to data dir:". This issue is present only in OpsCenter 6.8.0.

- Optional: If necessary, change the staging directory by setting the backup_staging_directory configuration option in address.yaml.

- Optional: Click the Edit Restart Settings link to adjust settings for the rolling restart. The default is a 60-second pause after restarting each node. Point-in-time restores require a rolling restart of all nodes.

-

Click Restore Backup.

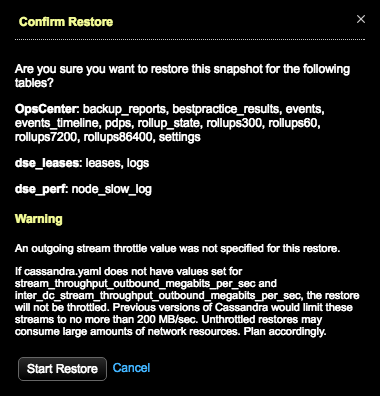

The Confirm Restore dialog appears.

If a value was not set for throttling stream output, a warning message indicates the consequences of unthrottled restores. Take one of the following actions:

-

Click Cancel and set the throttle value in the Restore from Backup dialog.

-

Set the

stream_throughput_outbound_megabits_per_secandinter_dc_stream_throughput_outbound_megabits_per_secvalues incassandra.yaml. -

Proceed anyway at the risk of creating network bottlenecks.

If using LCM to manage DSE cluster configuration, update Cluster Communication settings in

cassandra.yamlin the configuration profile for the cluster and run a configuration job. Stream throughput (not inter-dc) is set to 200 in LCM defaults.

-

-

Click Start Restore to confirm when prompted.
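If the Confirm Restore dialog warns about an unthrottled restore, it can help to see what a node's cassandra.yaml currently sets before choosing one of the actions above. The following is a minimal sketch: the function name `show_stream_throttle` is hypothetical, and the file path in the usage comment is an assumption about a package installation.

```shell
# Print the uncommented stream throughput settings found in a cassandra.yaml file.
# Prints nothing and returns non-zero when neither setting is set explicitly.
show_stream_throttle() {
    yaml_file="$1"
    grep -E '^(inter_dc_)?stream_throughput_outbound_megabits_per_sec:' "$yaml_file"
}

# Usage (the path is an assumption; adjust it for your installation):
#   show_stream_throttle /etc/dse/cassandra/cassandra.yaml
```

Commented-out entries are deliberately ignored, so no output suggests that neither value has been set explicitly in that file.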

Results

OpsCenter retrieves the backup data and sends the data to the nodes in the cluster. A snapshot restore is completed first, following the same process as a normal snapshot restore. After the snapshot restore successfully completes, OpsCenter instructs all agents in parallel to download the necessary commit logs, followed by a rolling commit log replay across the cluster. Each node is configured for replay and restarted after the previous node finishes successfully.
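Because OpsCenter 6.8.0 can report a successful restore even when copying SSTables failed, it may be worth checking the DataStax Agent log on each node once the restore finishes. The sketch below searches a log file for the error string quoted in the procedure. The function name `restore_copy_failed` is hypothetical, and the log path in the usage comment is an assumption; use the location where your agents actually write their logs.

```shell
# Search an agent log for the SSTable copy error named on this page.
# Prints any matching lines; returns 0 if the error was found, non-zero otherwise.
restore_copy_failed() {
    log_file="$1"
    # -F treats the pattern as a fixed string rather than a regular expression.
    grep -F 'Error copying sstables to data dir:' "$log_file"
}

# Usage (the log path is an assumption):
#   if restore_copy_failed /var/log/datastax-agent/agent.log; then
#       echo "Restore failed on this node despite the reported success."
#   fi
```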

If an error occurs during a point-in-time restore for a subset of tables, you might need to manually revert changes made to some cluster nodes.

To clean up a node, locate and edit dse-env.sh and remove the last line that specifies JVM_OPTS.

The default location of this file depends on the type of installation:

- Package installations: /etc/dse/dse-env.sh

- Tarball installations: INSTALL_DIRECTORY/bin/dse-env.sh

For example, remove:

export JVM_OPTS="$JVM_OPTS -Dcassandra.replayList=Keyspace1.Standard1"
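The manual edit can also be scripted. The following is a minimal sketch rather than an official procedure: instead of removing the last JVM_OPTS line by position, it deletes any line containing the -Dcassandra.replayList= option, on the assumption that this option is what the failed restore left behind. The function name `remove_replay_list` is hypothetical. Review the file before and after running it.

```shell
# Remove the commit log replay setting left behind in dse-env.sh.
# Assumption: the leftover line is the one containing -Dcassandra.replayList=.
remove_replay_list() {
    env_file="$1"
    # Edit in place and keep the original as <file>.bak for recovery.
    sed -i.bak '/-Dcassandra\.replayList=/d' "$env_file"
}

# Usage (package installation path from this page; adjust for tarball installations):
#   remove_replay_list /etc/dse/dse-env.sh
```

Matching on the option name rather than on line position avoids deleting an unrelated JVM_OPTS line if the file was edited after the restore attempt.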